Introduction to GenAI and Prompt Engineering

I am a developer and artist, and I’m trying my luck at blogging as well. I’m ambitious, passionate about learning, a night owl, and I like challenges...

What is GenAI and Why Does Prompting Matter?

If you’ve ever wondered how people interact with AI tools, or why some folks seem to get exactly what they want from tools like ChatGPT while others receive confusing or generic responses, this article is for you. We’ll unveil the basics of Generative AI (GenAI), teach you about prompts, and set you up for success in the art of communicating with AI—no matter your background.

What is GenAI? Breaking Down the Buzzword

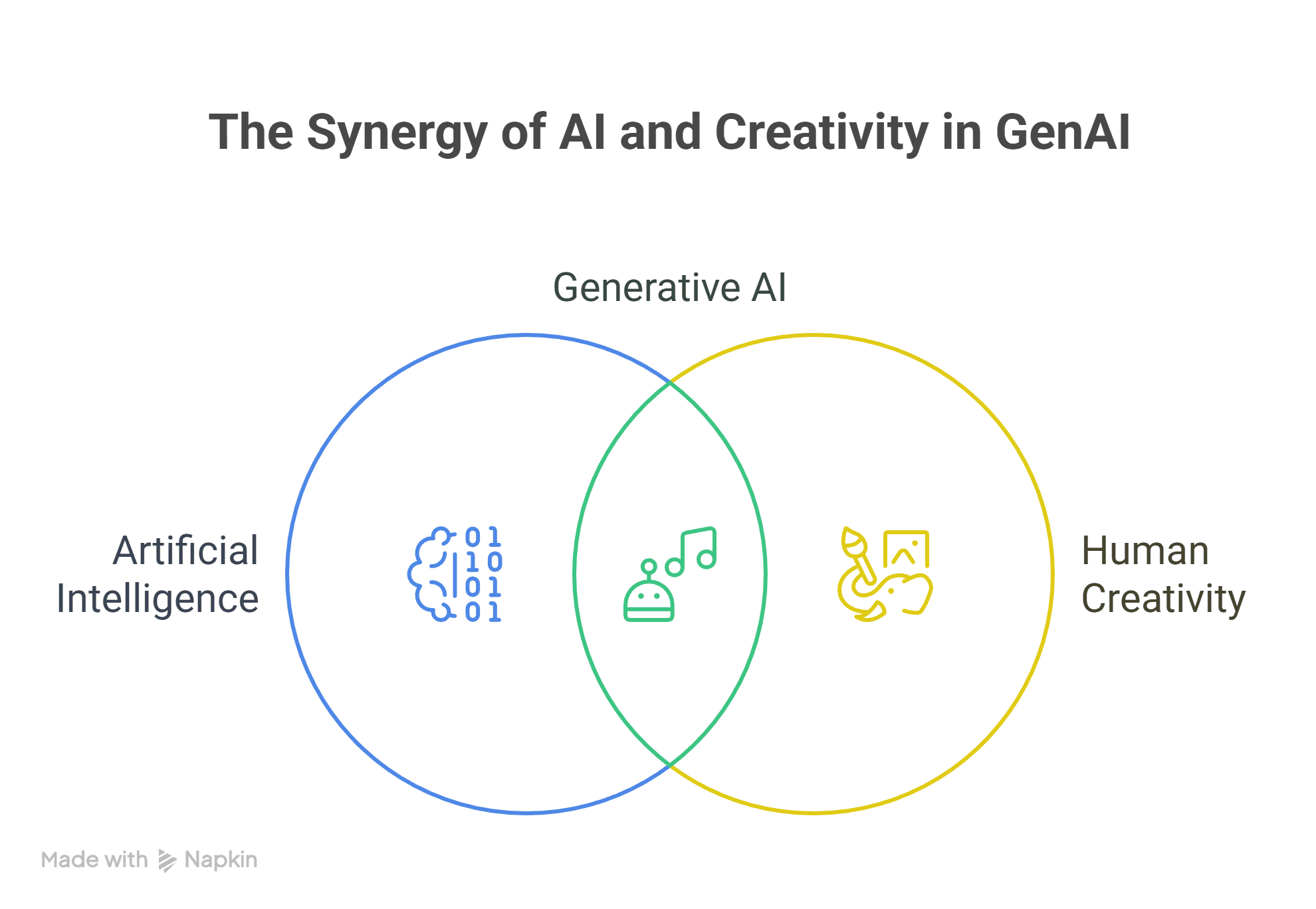

At its core, GenAI stands for Generative Artificial Intelligence. It’s not just another tech industry fad; it marks a major shift in how computers can support us, work with us, and even create for us.

GenAI in Plain English

Think of GenAI as a highly intelligent assistant, one who doesn’t just fetch information, but who actually creates content. Whether you want it to write a story, summarize a report, sketch a logo, or compose a melody, GenAI listens to (or rather, “reads”) your instructions and produces something new. It doesn’t simply retrieve facts; it uses patterns learned from mountains of information to generate original outputs.

Real-Life Example: The Coffee Shop Scenario

Let’s put GenAI into a real-world situation you already know. Picture yourself at your favorite coffee shop:

You say: “One cappuccino, please.”

- The barista crafts your drink exactly to your taste.

But what if you’re less specific?

You say: “Give me something hot.”

- The barista could hand you tea, cocoa, an espresso… it’s anyone’s guess!

This is exactly how GenAI works: the clarity and detail of your request (or prompt) directly influence the success and accuracy of what you get back.

What is a Prompt? Your “Magic Wand” for GenAI

A prompt is what you say or write to “instruct” the AI. It’s your command, your wish, your ask—wrapped up in a short phrase, a question, or even a detailed scenario.

Prompts can be simple:

- “Summarize this article.”

Or complex:

- “Write a friendly email to my team, summarizing our project progress, highlighting Sarah’s achievement, and suggesting a virtual celebration.”

Analogy: The Genie in the Lamp

Recall fairy tales about genies who grant wishes:

Vague wish: “I want to be happy.”

Specific wish: “I want to have a picnic in Central Park this Saturday with my friends, sunny weather, and no bugs.”

The second wish is much more likely to lead to the outcome you desire. Similarly, your prompt is how you “wish” something out of GenAI—the clearer, the better.

Why Should You Care About Prompts?

Learning to use the right prompt is like finding the magic key that unlocks GenAI’s full potential. Good prompts can help you:

- Produce emails, reports, social posts, or creative writing.

- Analyze data, summarize complex information, or brainstorm fresh ideas.

- Automate repetitive tasks, create images or code snippets, and more.

Everyday Example: Email to Your Boss

Let’s make this practical:

Poor prompt:

“Help me.”

- (GenAI: “How can I help you?”)

Better prompt:

“Please write me an email to my boss explaining I’m sick and can’t come to work.”

- (GenAI: Produces a clear, professional message ready to send!)

Takeaway: The results improve dramatically when you put in a little thought, just like how your experience at a restaurant improves when you’re clear with your order.

More Real-World Examples Across Fields

Let’s broaden this with practical examples:

Marketing:

- “Create three catchy headlines for a spring sale on winter clothing.”

Education:

- “Explain the theory of relativity in simple terms for a 10-year-old.”

Healthcare:

- “Summarize this patient’s medical history in three sentences.”

Design:

- “Generate a logo concept for a modern vegan café, using green and white.”

Programming:

- “Write a Python script that sorts a list of names alphabetically.”

In each case, the clarity, context, and specificity of your prompt drive the quality of GenAI's response.
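To make the programming prompt concrete, here is a sketch of what a response might look like. The prompt never says whether uppercase and lowercase names should be treated the same, so this version assumes they should; that gap is exactly the kind of detail a more specific prompt would settle. The function name `sort_names` and the sample names are made up for illustration.

```python
def sort_names(names):
    """Return the names in alphabetical order, ignoring upper/lower case."""
    return sorted(names, key=str.casefold)


names = ["maria", "Zoe", "alex", "Bilal"]
sorted_names = sort_names(names)
print(sorted_names)
```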

Behind the Scenes: How Does GenAI Actually Work?

While Generative AI can feel magical—responding instantly and intelligently to your prompts—what’s happening under the hood is a sophisticated blend of computer science, mathematics, and large-scale data engineering. Below, I’ll break down each key stage and component, using clear explanations and practical analogies to help you visualize what’s really going on during a typical GenAI interaction.

1. Pre-Training: Building the AI Brain

Massive Data Ingestion:

GenAI models like GPT-4 are “pre-trained” on enormous datasets scraped from the internet, books, articles, code, images, and more.

Data is tokenized (broken into small chunks), and each token is mapped to a numerical value that the model can manipulate.

Self-Supervised Learning:

During training, the model is shown billions of sequences (sentences, code, etc.) and learns to predict the next token in a sequence.

No human labels are required here—the model learns by trying to guess missing words or next words, seeing where it went wrong, and updating itself.

Emergence of Patterns:

Over time, the neural network captures intricate relationships in language: grammar rules, contextual clues, cultural references, and even some logic.

Weights and biases in the model are gradually tuned so that it gets better and better at predicting what comes next in any given context.
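The idea of learning from raw text without human labels can be sketched with a much simpler stand-in: a word-pair counter. This is not how a neural network is trained (there are no weights or gradients here), but it shows the same principle that the "label" for each word is simply the word that follows it. The corpus and function names are invented for this sketch.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the text."""
    words = text.split()
    follows = defaultdict(Counter)
    # Each (current word, next word) pair is a free training example.
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    if word not in follows:
        return None
    return follows[word].most_common(1)[0][0]


corpus = "the cat sat on the mat . the cat ate . the dog sat on the rug ."
model = train_bigram(corpus)
print(predict_next(model, "sat"))
print(predict_next(model, "the"))
```

A real model replaces the counting table with billions of tuned parameters and looks at far more than one previous word, but the training signal comes from the text itself in the same way.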

2. Prompt Processing: Interpreting Your Input

Tokenization:

When you type a prompt, it’s instantly split into tokens (words, subwords, or even characters, depending on the model’s design).

Example: “Write a summary of Hamlet” → [‘Write’, ‘a’, ‘summary’, ‘of’, ‘Ham’, ‘let’] (the exact split depends on the model’s tokenizer)
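Here is a minimal sketch of how such a split could happen, assuming a tiny hand-written vocabulary and a greedy longest-match rule. Real tokenizers learn their vocabularies from data and use more refined algorithms, so treat `VOCAB` and both functions as hypothetical.

```python
# Hypothetical toy vocabulary; real tokenizers have tens of thousands of entries.
VOCAB = {"Write": 0, "a": 1, "summary": 2, "of": 3, "Ham": 4, "let": 5}


def tokenize_word(word, vocab):
    """Split one word into the longest vocabulary pieces, left to right."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate piece until it matches a vocabulary entry.
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            raise ValueError(f"cannot tokenize {word!r}")
        pieces.append(word[start:end])
        start = end
    return pieces


def tokenize(text, vocab):
    """Tokenize whitespace-separated text into vocabulary pieces."""
    tokens = []
    for word in text.split():
        tokens.extend(tokenize_word(word, vocab))
    return tokens


tokens = tokenize("Write a summary of Hamlet", VOCAB)
token_ids = [VOCAB[t] for t in tokens]  # the numbers the model actually sees
print(tokens)
print(token_ids)
```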

Embeddings:

Each token is converted into a high-dimensional vector (embedding) that captures its semantic meaning.

These vectors provide the foundation for all subsequent computations.
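To give a feel for what "captures semantic meaning" means, the sketch below compares made-up three-dimensional vectors using cosine similarity. Real embeddings have hundreds or thousands of dimensions and are learned during training; the words and numbers here are invented purely for illustration.

```python
import math

# Hypothetical 3-dimensional embeddings; the numbers are made up.
EMBEDDINGS = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}


def cosine_similarity(u, v):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


royal = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["queen"])
fruit = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["apple"])
print(round(royal, 3), round(fruit, 3))
```

With these toy numbers, "king" lands much closer to "queen" than to "apple", which is the kind of relationship a trained embedding space tends to encode.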

Neural Network Layers:

The sequence of embeddings flows through dozens or even hundreds of transformer layers.

Each layer adjusts its understanding by considering the position and context of each token within the entire prompt (“attention mechanisms”).

These attention maps allow the AI to weigh meaning and relationships, just as a human would focus on key words in a sentence for overall meaning.
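The core calculation behind attention can be sketched in a few lines. This is a simplified, single-query version of scaled dot-product attention; in a real transformer the query, key, and value vectors come from learned projections of the embeddings, whereas the numbers below are made up.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    # How strongly the query matches each key.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is a weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 0.0]
weights, output = attention(query, keys, values)
print([round(w, 3) for w in weights])
```

Tokens whose keys line up with the query receive larger weights, so they contribute more to the result.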

3. Token Prediction: Generating the Output

Stepwise Generation:

The model doesn’t generate your answer all at once. Instead, it predicts one token at a time.

After each token is predicted, the new token is appended to the prompt, and the model recalculates the probabilities for what should come next.
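The loop itself is easy to sketch. Below, a hypothetical lookup table stands in for the model's prediction step (a real model would compute probabilities over its whole vocabulary), and `<end>` is an assumed marker for "stop generating".

```python
# Hypothetical lookup table standing in for the model's prediction step.
NEXT_TOKEN = {"Once": "upon", "upon": "a", "a": "time", "time": "<end>"}


def predict_next_token(tokens):
    """Stand-in for the model: this toy version looks only at the last token."""
    return NEXT_TOKEN.get(tokens[-1], "<end>")


def generate(prompt_tokens, max_new_tokens=10):
    """Generate one token at a time, feeding each result back in as input."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        next_token = predict_next_token(tokens)
        if next_token == "<end>":
            break
        tokens.append(next_token)  # the new token becomes part of the input
    return tokens


print(generate(["Once"]))
```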

Probability Distribution:

For every step, the AI constructs a probability distribution over its entire vocabulary, assigning a likelihood to each possible next token.

The final output is selected via “sampling”—potentially influenced by parameters like temperature.

Temperature (Creativity Control):

Lower temperature = more predictable and consistent answers, sometimes at the cost of repetition.

Higher temperature = more creative and surprising outputs, but sometimes less precise.

Example:

If you prompt, “Once upon a time in a faraway kingdom,” the model scores all likely next words: ‘there’, ‘a’, ‘lived’, etc.—and picks the most probable, or sometimes a slightly less probable one if sampling is used.
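The effect of temperature can be sketched with a small calculation. The raw scores (logits) below are invented for the three candidate words in the example; dividing them by the temperature before applying softmax is a common way to implement this control, and the temperature is assumed to be greater than zero.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Convert raw scores into probabilities, sharpened or flattened by temperature."""
    scaled = [value / temperature for value in logits.values()]
    highest = max(scaled)
    exps = [math.exp(s - highest) for s in scaled]
    total = sum(exps)
    return {token: e / total for token, e in zip(logits, exps)}


def sample(probabilities, rng):
    """Pick one token at random, weighted by its probability."""
    tokens = list(probabilities)
    weights = list(probabilities.values())
    return rng.choices(tokens, weights=weights, k=1)[0]


# Hypothetical raw scores for the next token.
logits = {"there": 2.0, "a": 1.5, "lived": 1.0}
cool = softmax_with_temperature(logits, 0.2)
warm = softmax_with_temperature(logits, 2.0)
print({t: round(p, 3) for t, p in cool.items()})
print({t: round(p, 3) for t, p in warm.items()})
print(sample(warm, random.Random(0)))
```

At the low temperature almost all of the probability sits on the top-scoring word; at the high temperature the three options are much closer together, so sampling produces more variety.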

4. Advanced Features: Context Window, Memory, and Roles

Context Window:

Each model has a “context window” (e.g., 8,000 or even 128,000 tokens), which is the maximum amount of input and output it can consider at once.

Anything that falls outside this window is not visible to the model, so in long conversations the earliest content is usually what gets dropped.
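One simple way an application might enforce the limit is to keep only the most recent tokens. This is a sketch of that one strategy, not a description of how any particular product does it; the function name and the tiny window size are made up.

```python
def fit_to_context(tokens, max_tokens):
    """Keep only the most recent tokens that fit in the window."""
    if max_tokens <= 0:
        return []
    return tokens[-max_tokens:]


history = ["t1", "t2", "t3", "t4", "t5", "t6"]
visible = fit_to_context(history, 4)
print(visible)
```

Other strategies exist, such as summarizing older content instead of discarding it.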

Conversation Memory:

Chat applications can carry information across turns in a conversation, typically by feeding earlier messages back to the model as part of its context (sometimes called session memory).

This allows for coherent responses even when context is spread over several exchanges.

System and Role Prompts:

You can set context or roles (e.g., “Act as a financial analyst”) to steer the AI’s tone, expertise, or style throughout the conversation.

These system prompts act as invisible guide rails, subtly influencing the AI’s output.
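Many chat interfaces represent a conversation as a list of messages, each tagged with a role. The sketch below assumes that convention, with `role` and `content` as field names; the exact format varies between tools, and the helper functions are hypothetical.

```python
def start_conversation(system_prompt):
    """Begin a conversation with a system message that sets the role."""
    return [{"role": "system", "content": system_prompt}]


def add_turn(messages, role, content):
    """Append one turn; the whole list is what gets sent on the next request."""
    messages.append({"role": role, "content": content})
    return messages


messages = start_conversation("Act as a financial analyst.")
add_turn(messages, "user", "What is a bond?")
add_turn(messages, "assistant", "A bond is a loan to a company or government.")
add_turn(messages, "user", "How is it different from a stock?")

for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Because the system message stays at the top of the list on every request, it keeps steering the tone, and because earlier turns are included, the last question can say "it" and still be understood.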

5. End-to-End Example

Let’s tie it all together with a simple walk-through:

Prompt Submitted: “Explain quantum computing to a 10-year-old.”

Tokenization: Prompt broken into manageable tokens.

Embedding & Encoding: Each token mapped into a vector; processed by the transformer network.

Attention & Context: Model pays special attention to key concepts (“quantum computing”, “10-year-old”).

Stepwise Prediction: AI predicts, generates, and appends each new token until the response is complete.

Output Displayed: The generated text appears on your screen—reading like an expert’s explanation, but tailored to a child’s understanding.

Technical Example

Suppose you prompt:

“Write a SQL query to select the top 5 products by sales from orders table.”

Behind the curtain:

The AI parses the request, recognizes technical keywords (SQL, select, top, sales, orders table), and assembles a likely correct statement from millions of similar examples in its training data.

It might output something like this, guessing column names such as product_id and sales:

    SELECT product_id, SUM(sales) AS total_sales
    FROM orders
    GROUP BY product_id
    ORDER BY total_sales DESC
    LIMIT 5;

You didn’t have to code from scratch; the prompt did the heavy lifting!
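It is still worth checking generated SQL before relying on it. Here is a minimal sketch that runs the query against an in-memory SQLite database using Python's built-in `sqlite3` module. The table layout (`product_id`, `sales`) and the sample rows are assumptions made for this test, and `LIMIT` is not supported by every database (SQL Server, for example, uses `TOP` instead).

```python
import sqlite3

QUERY = """
SELECT product_id, SUM(sales) AS total_sales
FROM orders
GROUP BY product_id
ORDER BY total_sales DESC
LIMIT 5;
"""

# Made-up rows: (product_id, sales). Products 1 and 2 appear twice.
rows = [
    (1, 50.0), (2, 20.0), (1, 25.0), (3, 90.0),
    (4, 5.0), (5, 10.0), (6, 1.0), (2, 40.0),
]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (product_id INTEGER, sales REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
top_products = conn.execute(QUERY).fetchall()
conn.close()

print(top_products)
```

Six products go in and only the five with the highest totals come back, with repeated products summed, which is what the prompt asked for.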

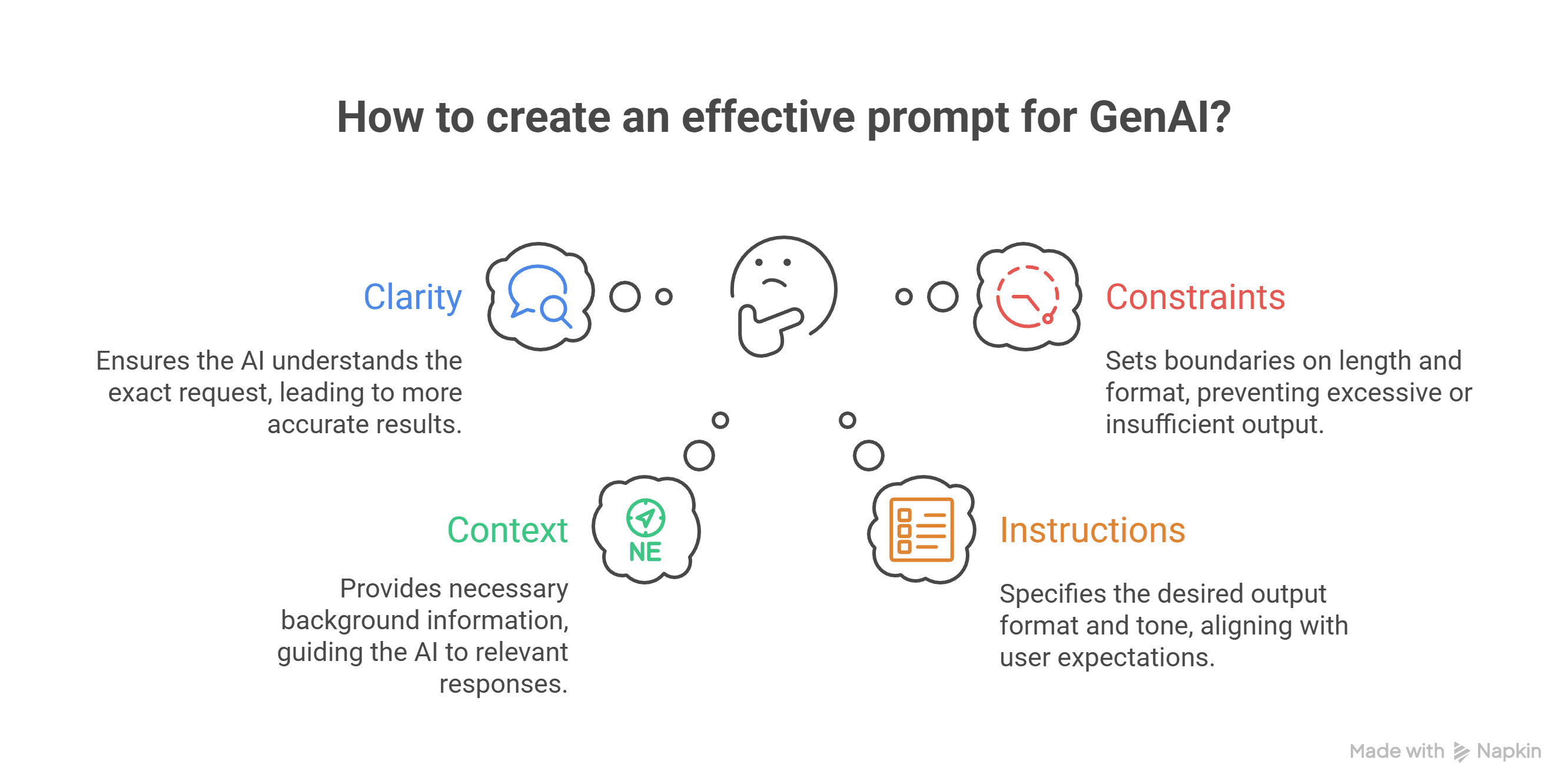

Tips for Writing Great Prompts (and Avoiding Frustration)

Be Clear and Direct: State exactly what you want, with as much relevant detail as necessary.

Define Role or Tone: If you want a friendly email, say so. If you want a technical explanation, specify the audience.

Use Examples: If appropriate, share a template or sample within your prompt.

Iterate: If you don’t get what you want the first time, tweak your prompt and try again—like clarifying your order at a café.
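If you write similar prompts often, the tips above can be turned into a small reusable template. This is just one possible sketch; the function name, parameters, and wording of each piece are invented for illustration.

```python
def build_prompt(task, role=None, audience=None, tone=None, example=None):
    """Assemble a prompt from a clear task plus optional role, audience, tone, and example."""
    parts = []
    if role:
        parts.append(f"Act as {role}.")
    parts.append(task)
    if audience:
        parts.append(f"The audience is {audience}.")
    if tone:
        parts.append(f"Use a {tone} tone.")
    if example:
        parts.append(f"Here is an example of the style I want:\n{example}")
    return "\n".join(parts)


prompt = build_prompt(
    task="Write an email to my boss explaining I'm sick and can't come to work.",
    tone="polite, professional",
)
print(prompt)
```

Iterating then becomes a matter of changing one argument, such as the tone or the audience, and trying again.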

Why Prompt Engineering is the “New Literacy”

In many ways, prompt engineering is becoming a vital skill—like reading or basic computer literacy. The more you practice, the more powerful and precise your interactions with AI will become.

- Students can leverage AI for studying and homework help.

- Professionals can speed up research, drafting, analytics, and more.

- Creatives can expand their toolkit, generating fresh ideas at the push of a button.

Prompt engineering isn’t about “talking to robots”—it’s about shaping technology so it truly works for you.