Understanding Large Language Models: What You Need to Know

I am a developer and artist, trying my luck at blogging as well. I am ambitious, passionate about learning, a night owl, and I like challenges...

Post #2 in the Complete Prompt Engineering Series

Welcome back! In "What is Prompt Engineering? A Complete Introduction," you learned what prompt engineering is and why it matters. Now we're going deeper: understanding the engine under the hood. You don't need to become a machine learning engineer, but understanding how LLMs work will transform how you prompt them.

Why Understanding LLMs Makes You a Better Prompt Engineer

Here's a question: Would you be a better driver if you understood how an engine works? Maybe, maybe not—but you'd definitely be better at diagnosing problems and maximizing performance.

The same applies to prompt engineering.

When you understand:

Why temperature settings change output personality

How tokens affect your costs and results

Why context windows are your biggest constraint

What makes different models excel at different tasks

...you stop guessing and start engineering.

This post will give you that X-ray vision. By the end, when an AI gives you an unexpected response, you'll know exactly why—and how to fix it.

Part 1: How Large Language Models Actually Work (The Simplified Truth)

The Core Concept: Statistical Prediction at Scale

Let's start with a truth that changes everything:

Large Language Models don't "understand" language. They predict it.

Imagine you're playing a game where I say: "The cat sat on the..."

Your brain instantly suggests: mat, chair, windowsill, table—all reasonable completions. That's essentially what an LLM does, except:

It has learned patterns from trillions of words of training data

It calculates the probability of each possible next word

It selects based on those probabilities (modified by parameters we'll discuss)

It repeats this process word by word (more precisely, token by token) until the response is complete

Key insight: Every word you see from an AI is a prediction based on:

The patterns it learned during training

Your prompt (the context you provided)

The parameters you've set (temperature, etc.)
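To make the "predict, select, repeat" loop concrete, here is a tiny sketch. It is nowhere near a real LLM: it only counts which word follows which in a made-up three-sentence corpus, and every name in it (`CORPUS`, `train_bigrams`, `generate`) is invented for illustration. The loop has the same shape, though: get probabilities for the next word, sample one, append it, repeat.

```python
import random
from collections import Counter, defaultdict

# Toy "training data"; a real model learns from trillions of tokens.
CORPUS = "the cat sat on the mat . the cat sat on the chair . the dog sat on the mat ."

def train_bigrams(text):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn raw follow-up counts into a probability distribution."""
    total = sum(counts[word].values())
    return {w: c / total for w, c in counts[word].items()}

def generate(counts, start, max_words=8, seed=0):
    """Repeatedly sample a next word until a period or the length limit."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(max_words):
        probs = next_word_probabilities(counts, output[-1])
        if not probs:
            break
        words, weights = zip(*probs.items())
        output.append(rng.choices(words, weights=weights)[0])
        if output[-1] == ".":
            break
    return " ".join(output)

model = train_bigrams(CORPUS)
print(next_word_probabilities(model, "the"))
print(generate(model, "the"))
```

A real model conditions on the whole prompt rather than just the previous word, which is exactly what the Transformer architecture makes practical.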

The Transformer Architecture: The Breakthrough That Changed Everything

In 2017, Google researchers published a paper called "Attention is All You Need" that introduced the Transformer architecture. This was the earthquake that created the AI revolution we're experiencing.

What made it revolutionary?

Before Transformers: Sequential Processing

Older models (RNNs, LSTMs) had to process text sequentially—one word at a time, left to right. This meant:

❌ Slow processing

❌ Limited context understanding

❌ Difficulty with long-range dependencies

❌ Couldn't parallelize (use multiple processors simultaneously)

Example problem:

In the sentence "The chef who trained in France and Italy and worked at multiple Michelin-starred restaurants opened his new restaurant," the word "his" refers back to "chef," but there are 13 words in between. Old models struggled with these connections.

The Transformer Revolution: Attention Mechanism

Transformers introduced self-attention, which allows the model to:

✅ Look at all words simultaneously

✅ Understand relationships between any words in the input

✅ Process in parallel (much faster)

✅ Handle long-range dependencies effectively

The "Attention" mechanism answers: "When processing this word, which other words in the sequence should I pay attention to?"

Visual analogy:

Old way: Reading a book by looking at one word at a time through a tiny hole

Transformer way: Seeing the entire page at once and understanding how every word relates to every other word
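If you want to see the "which words should I pay attention to?" question as arithmetic, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is heavily simplified: the 2-dimensional vectors are made up, and queries, keys, and values are all just the input vectors, whereas real Transformers use learned projections, many attention heads, and (in decoder-only models) a mask that hides future tokens.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product attention with queries = keys = values = inputs."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this word to every word in the sequence.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # New representation: a weighted mix of all the word vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Made-up 2-dimensional "embeddings" for three words.
tokens = ["chef", "restaurant", "his"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
_, weights = self_attention(vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

In this toy setup the row for "his" puts more weight on "chef" than on "restaurant," simply because their vectors point in a similar direction. Every row is computed independently, which is why the whole thing parallelizes so well.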

The Three Components of a Transformer

1. Input Embeddings

Your text is converted into numerical vectors (we'll cover this in tokenization)

These vectors capture semantic meaning: "king" - "man" + "woman" ≈ "queen"

2. Encoder-Decoder Architecture (or Decoder-Only)

Encoder: Processes the input and creates a rich representation

Decoder: Generates the output based on that representation

Modern LLMs (GPT series, LLaMA) use decoder-only architecture for generation tasks

3. Attention Layers (The Magic)

Multiple layers of attention mechanisms

Each layer learns different patterns: grammar, facts, reasoning, style, etc.

GPT-4 is rumored to have 120+ layers (OpenAI has not confirmed this); Claude 3.5 is likely of similar complexity

Training: How Models Learn

Phase 1: Pre-training (The Foundation)

The model reads massive amounts of text from the internet and learns to predict the next word.

Scale we're talking about:

GPT-3: Trained on ~300 billion tokens, drawn from ~45TB of raw text that was filtered down to about 570GB

GPT-4: Estimated 10-20 trillion tokens

LLaMA 2: 2 trillion tokens

Claude 3: Estimated similar or greater scale

What it learns:

Language patterns and grammar

Factual knowledge (though imperfectly)

Reasoning patterns

Common sense associations

Cultural and domain knowledge

Unfortunately, also biases present in training data

Training objective: Given this sequence of words, predict the next one. Repeat billions of times across trillions of words.
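For readers who like formulas, the next-word objective is usually written as a cross-entropy loss. This is the standard textbook form, not any particular lab's exact recipe, and the symbols are my own notation: $x_t$ is the token at position $t$, $T$ is the sequence length, and $\theta$ stands for the model's parameters.

```latex
\mathcal{L}(\theta) = -\sum_{t=1}^{T} \log p_\theta\left(x_t \mid x_1, \dots, x_{t-1}\right)
```

Training nudges $\theta$ to make this number smaller, which means assigning higher probability to the token that actually came next.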

Phase 2: Fine-Tuning (Specialization)

After pre-training, models undergo additional training:

a) Supervised Fine-Tuning (SFT)

Human-written examples of good responses

Teaches the model to follow instructions

"When someone asks X, respond like Y"

b) Reinforcement Learning from Human Feedback (RLHF)

Humans rank multiple model outputs

Model learns which responses humans prefer

This is why ChatGPT and Claude are so much better at conversation than raw GPT-3

The result: A model that can follow complex instructions, maintain context, and generate human-like text.

Parameters: The Model's "Memory"

You've probably heard models described by their parameter count: "GPT-4 has 1.7 trillion parameters!"

What are parameters?

Think of them as the model's "knowledge weights"—the numerical values that determine how the model processes and generates text.

Model sizes:

GPT-3: 175 billion parameters

GPT-4: ~1.7 trillion parameters (estimated, 8 expert mixture)

Claude 3 Opus: Estimated ~500B-1T parameters

LLaMA 2 70B: 70 billion parameters

Gemini Ultra: Estimated 1.5T+ parameters

Bigger = better? Usually, but...

✅ More parameters = more knowledge capacity

✅ Better reasoning and nuance

❌ Much more expensive to run

❌ Slower response times

❌ Not always necessary for simpler tasks

Practical implication: A 70B open-source model might be perfect for your use case, saving you 90% in costs compared to GPT-4.

Why This Matters for Prompting

Understanding this architecture explains:

1. Why context order matters

Attention looks at the whole prompt in parallel, but position still matters. Output is generated one token at a time, and in practice the text closest to the point of generation tends to carry extra weight.

Practical tip: Put the most important instructions near the end of your prompt.

2. Why the model can be confident yet wrong

It's predicting based on patterns, not retrieving facts from a database. High probability ≠ factually correct.

Practical tip: Request citations, use chain-of-thought prompting, verify critical information.

3. Why examples are so powerful

Examples directly influence the probability distribution for the next tokens.

Practical tip: Few-shot prompting works because you're literally showing the model the pattern you want.

4. Why some tasks are harder than others

Complex reasoning requires the model to maintain and manipulate abstract representations across many steps.

Practical tip: Break complex tasks into smaller steps (prompt chaining).

Part 2: Tokenization—The Hidden Language of AI

Here's something that will change how you write prompts forever: AI doesn't see words. It sees tokens.

What Are Tokens?

Tokens are the basic units of text that models process. They're not exactly words, not exactly characters—they're subword units.

The rough rule: 1 token ≈ 4 characters or ≈ 0.75 words in English

Examples:

"Hello world!" = 3 tokens ["Hello", " world", "!"]

"artificial intelligence" = 3 tokens ["art", "ificial", " intelligence"]

"ChatGPT" = 2 tokens ["Chat", "GPT"]

"antidisestablishmentarianism" = 6 tokens ["ant", "idis", "establish", "ment", "arian", "ism"]

Why not just use words?

Many languages don't have clear word boundaries (Chinese, Japanese)

Tokenization handles new/rare words better

More efficient processing

Handles numbers, punctuation, code, etc.

How Tokenization Works

Step 1: Byte-Pair Encoding (BPE)

The most common approach. The algorithm:

Starts with individual characters

Finds the most frequently occurring pairs

Merges them into single tokens

Repeats until reaching the desired vocabulary size

Result: Common words = 1 token, rare words = multiple tokens
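The four steps above can be sketched in a few lines of Python. This is a teaching version under simplifying assumptions: it works on a tiny invented word-frequency table, merges characters rather than bytes, and ignores details such as whitespace handling that production tokenizers care about. The function names are mine.

```python
from collections import Counter

def most_frequent_pair(words):
    """Count adjacent symbol pairs across all words and return the most common."""
    pairs = Counter()
    for symbols, freq in words.items():
        for left, right in zip(symbols, symbols[1:]):
            pairs[(left, right)] += freq
    return pairs.most_common(1)[0][0] if pairs else None

def merge_pair(words, pair):
    """Replace every occurrence of the pair with a single merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        merged[tuple(out)] = merged.get(tuple(out), 0) + freq
    return merged

def learn_bpe(word_counts, num_merges):
    """Start from characters and apply the most frequent merge repeatedly."""
    words = {tuple(word): count for word, count in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:
            break
        words = merge_pair(words, pair)
        merges.append(pair)
    return merges, words

# Toy word frequencies, invented for illustration.
merges, vocab = learn_bpe({"low": 5, "lower": 2, "newest": 6, "widest": 3}, num_merges=4)
print(merges)
print(vocab)
```

Run it with a larger `num_merges` and you will see frequent words collapse into a single symbol while rarer ones stay split into pieces.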

Vocabulary sizes:

GPT-2/GPT-3: 50,257 tokens

GPT-3.5/GPT-4: ~100,000 tokens

Claude: ~100,000 tokens

LLaMA 1/2: 32,000 tokens (LLaMA 3: ~128,000)

Why Tokenization Matters for You

1. Cost Calculation

API pricing is per token, not per word:

OpenAI GPT-4: $0.03/1K input tokens, $0.06/1K output tokens

Claude Opus: $0.015/1K input tokens, $0.075/1K output tokens

Your 100-word prompt isn't 100 tokens—it's more like 130-150 tokens.

Pro tip: Use a tokenization tool to check the real count for your model.
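When you don't have a tokenizer handy, a back-of-the-envelope estimate is still useful. The sketch below only applies the two rules of thumb from this post (about 4 characters or about 0.75 words per token) and takes the larger result. It is an English-only heuristic, not a real tokenizer, and `estimate_tokens` is a name I made up.

```python
import math

def estimate_tokens(text):
    """Rough English-only estimate using two rules of thumb."""
    by_chars = len(text) / 4             # ~4 characters per token
    by_words = len(text.split()) / 0.75  # ~0.75 words per token
    return math.ceil(max(by_chars, by_words))

prompt = "Summarize the following customer review in one sentence."
print(estimate_tokens(prompt))
```

Treat the result as a planning number only. For billing or for prompts near a context limit, rely on your provider's own tokenizer or the usage figures the API returns.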

2. Context Window Limits

Models have token limits, not word limits:

GPT-4: 8K, 32K, or 128K tokens

Claude 3.5: 200K tokens

Gemini 1.5 Pro: 1M tokens

Common mistake: "I can fit 200,000 words in Claude!"

Reality: 200,000 words = ~260,000-300,000 tokens, so you'd exceed the 200K limit.

3. Token Efficiency

Some ways of writing use fewer tokens:

Example:

❌ Inefficient (many tokens):

"The individual who is responsible for the management and oversight of..."

✅ Efficient (fewer tokens):

"The manager of..."

But don't obsess over this. Clarity trumps token optimization except at massive scale.

4. Why Some Words Are "Harder" Than Others

Words that tokenize into more pieces are harder for the model:

Easy (1 token): "the", "and", "computer", "science"

Harder (multiple tokens): "antidisestablishmentarianism", rare names, specialized jargon

This is why:

Models sometimes misspell unusual names

They struggle with very rare technical terms

They're better with common vocabulary

5. Numbers and Code

Numbers tokenize unpredictably:

"1234" might be ["123", "4"] or ["12", "34"] or ["1", "2", "3", "4"]

This is one reason LLMs struggle with arithmetic: they're predicting token sequences, not calculating.

Code tokenizes differently than prose:

def calculate_total(items):
    return sum(items)

This might be 15-20 tokens, depending on how the tokenizer handles code syntax.

Practical Tokenization Tips for Prompting

1. Check your token count before submission

Especially for long prompts approaching context limits.

2. Be aware of token-heavy formats

JSON (lots of special characters)

Tables (formatting characters)

Code (syntax elements)

3. Don't pad unnecessarily

This doesn't help and wastes tokens: "Please, if you could, maybe, possibly..."

4. Use the model's native token count in API calls

Most APIs report token usage in the response (for example, usage.total_tokens in the OpenAI API). Monitor this for cost tracking.

Part 3: Context Windows and Their Limitations

The context window is the total amount of text (in tokens) a model can consider at once—including both your prompt and its response.

Think of it as the model's "working memory."

Current Context Window Sizes

| Model | Context Window | Practical Capacity |
| --- | --- | --- |
| GPT-3.5 Turbo | 16K tokens | ~12,000 words |
| GPT-4 | 8K tokens | ~6,000 words |
| GPT-4 (extended) | 32K tokens | ~24,000 words |
| GPT-4 Turbo | 128K tokens | ~96,000 words |
| Claude 3.5 Sonnet | 200K tokens | ~150,000 words |
| Claude 3 Opus | 200K tokens | ~150,000 words |
| Gemini 1.5 Pro | 1M tokens | ~750,000 words |
| LLaMA 3 | 8K-32K tokens | ~6,000-24,000 words |

The race is on: Companies are competing to offer larger context windows.

Why Context Windows Matter

1. They Define What You Can Input

Want to analyze an entire book? You need:

"The Great Gatsby" = ~47,000 words = ~63,000 tokens

Required: a context window larger than ~63K tokens, so in practice a 128K+ model

2. They Include the Response

If you have 8K tokens total:

Your prompt uses 6K tokens

Only 2K tokens remain for the response

That's ~1,500 words maximum output

Common issue: Long prompts with insufficient room for responses.

3. They Affect Attention Quality

Lost in the Middle Problem: Research shows models pay more attention to:

The beginning of the context

The end of the context

Less attention to information in the middle

Study findings (Liu et al., 2023; approximate figures that vary by model and task):

Information at the start: ~70% retrieval accuracy

Information in the middle: ~40% retrieval accuracy

Information at the end: ~65% retrieval accuracy

Practical implication: Put critical information at the beginning or end of your prompt.

Context Window Limitations

1. Processing Time

Larger contexts = longer processing:

8K tokens: ~2-5 seconds

128K tokens: ~10-30 seconds

1M tokens: Several minutes

2. Cost

You pay for every token in the context:

Example: Analyzing a 100K token document

Input cost: 100K tokens × $0.015/1K = $1.50 per query

If you query 1,000 times: $1,500
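The same arithmetic is easy to wrap in a helper so you can plug in your own numbers. The prices are the per-1K figures quoted earlier in this post and will drift over time, so treat them as placeholders. `query_cost` is a hypothetical helper, not part of any SDK.

```python
def query_cost(input_tokens, output_tokens, input_price_per_1k, output_price_per_1k):
    """Dollar cost of one request given per-1K-token prices."""
    return ((input_tokens / 1000) * input_price_per_1k
            + (output_tokens / 1000) * output_price_per_1k)

# Reproduces the example above: a 100K-token document at $0.015 per 1K input tokens.
per_query = query_cost(100_000, 0, input_price_per_1k=0.015, output_price_per_1k=0.075)
print(f"${per_query:.2f} per query, ${per_query * 1000:,.2f} for 1,000 queries")
```

Note that the example ignores output tokens. In a real budget, add the expected response length too, since output is usually priced higher than input.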

3. Quality Degradation

Models perform worse as context windows fill:

Some needle-in-a-haystack tests show accuracy dropping noticeably once the context is more than about half full, though this varies by model

Complex reasoning degrades faster than simple retrieval

4. The Recency Bias

Models weight more recent information higher. In long contexts:

Earlier information may be "forgotten"

Later information dominates

Strategies for Working Within Context Limits

1. Summarization

Step 1: Summarize document in chunks

Step 2: Combine summaries

Step 3: Analyze final summary

2. Sliding Window

Process text in overlapping chunks:

Chunk 1: Tokens 0-8000

Chunk 2: Tokens 6000-14000

Chunk 3: Tokens 12000-20000
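The chunk boundaries above come from a window of 8,000 tokens with a 2,000-token overlap. Here is a small sketch that computes them for any document length. The function name and defaults are my own, and it works on token positions only, so you would still need a tokenizer to slice real text.

```python
def sliding_windows(total_tokens, window=8000, overlap=2000):
    """Return (start, end) token ranges that overlap by `overlap` tokens."""
    if overlap >= window:
        raise ValueError("overlap must be smaller than window")
    step = window - overlap
    ranges, start = [], 0
    while start < total_tokens:
        end = min(start + window, total_tokens)
        ranges.append((start, end))
        if end == total_tokens:
            break
        start += step
    return ranges

print(sliding_windows(20000))
# [(0, 8000), (6000, 14000), (12000, 20000)]
```

The overlap is there so that a sentence or idea cut off at the end of one chunk shows up whole at the start of the next.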

3. Retrieval-Augmented Generation (RAG)

Don't put everything in context—retrieve only relevant sections:

1. Index all documents

2. User asks question

3. Retrieve top 5 relevant passages

4. Feed only those to the model

We'll cover this in detail in Post #18.
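Until then, here is a deliberately crude sketch of the retrieve-then-prompt flow. Real RAG systems typically rank passages with embeddings and a vector index. This version just counts shared words, and the passages, function names, and prompt wording are all invented for illustration.

```python
import re

def tokenize(text):
    """Lowercase and split into a set of words (a crude stand-in for embeddings)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, passages, top_k=5):
    """Rank passages by word overlap with the question and keep the best ones."""
    query_words = tokenize(question)
    scored = [(len(query_words & tokenize(p)), p) for p in passages]
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [p for _, p in scored[:top_k]]

def build_prompt(question, passages, top_k=5):
    """Put only the retrieved passages into the prompt, not the whole corpus."""
    context = "\n".join(f"- {p}" for p in retrieve(question, passages, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# Invented example passages.
docs = [
    "Refunds are issued within 14 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes 3 to 5 business days.",
]
print(build_prompt("How long do refunds take?", docs, top_k=1))
```

The point is the shape of the pipeline: the model only ever sees the handful of passages that survived retrieval, so the context stays small no matter how large the document set grows.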

4. Prompt Compression

Remove unnecessary verbosity:

❌ "I would really appreciate it if you could possibly help me understand..."

✅ "Explain..."

5. Stateful Conversations

For chatbots, summarize history periodically:

Every 10 messages:

- Summarize conversation so far

- Replace old messages with summary

- Continue with compressed context
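Here is one way that loop might look in code. It assumes the role/content message dictionaries that most chat APIs use, and `naive_summarize` is only a placeholder. In a real chatbot you would ask the model itself to write the summary.

```python
def compress_history(messages, summarize, every=10):
    """Replace the oldest `every` messages with one summary message when full."""
    if len(messages) < every:
        return messages
    old, recent = messages[:every], messages[every:]
    summary = {"role": "system", "content": "Summary so far: " + summarize(old)}
    return [summary] + recent

def naive_summarize(messages):
    """Placeholder: a real system would ask the model to write this summary."""
    return " | ".join(m["content"][:20] for m in messages)

history = [{"role": "user", "content": f"message {i}"} for i in range(12)]
history = compress_history(history, naive_summarize)
print(len(history))  # 3: one summary plus the two most recent messages
```

The trade-off is that details dropped from the summary are gone for good, so keep anything the user may refer back to (names, numbers, decisions) in the summary text.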

The Future of Context Windows

Trends to watch:

Infinite context: Research into models without fixed context limits

Hierarchical attention: Models that process different context levels differently

External memory: Models that can read/write to external storage

Selective attention: Models that automatically focus on relevant parts

But for now: Work within the constraints. They're getting better, but they're not going away soon.

Part 4: Parameters That Control Output (Temperature, Top-p, and More)

These are the knobs and dials that change how the AI generates text. Understanding them is like understanding shutter speed and aperture in photography—technical knowledge that unlocks creative control.

Temperature: The Creativity Dial

Definition: Controls randomness in token selection. Range: 0.0 to 2.0 in the OpenAI API (practical range: 0.0 to 1.5); some providers, such as Anthropic, cap it at 1.0.

How it works:

The model calculates probabilities for all possible next tokens:

"The sky is ____"

- blue: 40% probability

- clear: 25% probability

- cloudy: 20% probability

- falling: 0.1% probability

Temperature modifies these probabilities:

Temperature = 0 (Deterministic)

Always picks the highest probability token

Same prompt = nearly identical response every time (small variations can still occur in practice)

Maximum consistency, zero creativity

Temperature = 0.7 (Default/Balanced)

Mostly picks high-probability tokens

Some variation allowed

Balance of consistency and creativity

Temperature = 1.5 (High Creativity)

Flattens probability distribution

Low-probability tokens get more chances

More creative, more unpredictable

Higher risk of nonsense

Visual representation:

Low temp (0.2):  ████████ blue
                 ██ clear
                 █ cloudy

High temp (1.5): ████ blue
                 ███ clear
                 ██ cloudy
                 █ falling (now more likely!)
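You can reproduce this sharpening and flattening with a few lines of math. Dividing the model's raw scores by the temperature before the softmax is equivalent to raising each probability to the power 1/T and renormalizing, which is what this sketch does. It uses the four example probabilities from the sky example and renormalizes over just those four, so the exact numbers are illustrative only.

```python
import math

def apply_temperature(probs, temperature):
    """Rescale a probability distribution: p ** (1 / T), then renormalize."""
    if temperature <= 0:
        # Treat T = 0 as greedy decoding: all mass on the most likely token.
        best = max(probs, key=probs.get)
        return {token: (1.0 if token == best else 0.0) for token in probs}
    scaled = {token: math.exp(math.log(p) / temperature) for token, p in probs.items()}
    total = sum(scaled.values())
    return {token: value / total for token, value in scaled.items()}

# Probabilities from the example above (they cover only part of the vocabulary).
sky = {"blue": 0.40, "clear": 0.25, "cloudy": 0.20, "falling": 0.001}
for t in (0.2, 0.7, 1.5):
    adjusted = apply_temperature(sky, t)
    print(t, {token: round(p, 3) for token, p in adjusted.items()})
```

At low temperature "blue" swallows almost all of the probability. At high temperature the gap between "blue" and the rest narrows and "falling" becomes a live option.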

When to Use Different Temperatures

Temperature 0 - 0.3: Maximum Precision

✅ Factual Q&A

✅ Code generation

✅ Data extraction

✅ Classification tasks

✅ Legal/medical applications

✅ Anything requiring consistency

Example prompt:

Temperature: 0

Extract the following from this email: sender, date, main request, urgency level.

Temperature 0.7 - 1.0: Balanced (Default)

✅ General conversation

✅ Explanations

✅ Content writing

✅ Problem-solving

✅ Most use cases

Example prompt:

Temperature: 0.7

Write a professional email declining a meeting request.

Temperature 1.0 - 1.5: Maximum Creativity

✅ Creative writing

✅ Brainstorming

✅ Unique content generation

✅ Exploring unconventional ideas

❌ Not for factual accuracy

Example prompt:

Temperature: 1.3

Generate 20 unusual marketing campaign ideas for an artisanal pickle company.

Temperature 1.5 - 2.0: Experimental

Often produces nonsensical or incoherent results

Rarely useful in practice

Fun for experimentation

Top-p (Nucleus Sampling): The Alternative Control

Definition: An alternative to temperature that limits which tokens are considered, based on their cumulative probability. Range: 0.0 to 1.0

How it works:

Top-p = 0.9 means "consider tokens until their cumulative probability reaches 90%"

Token probabilities:

- blue: 40% (cumulative: 40%)

- clear: 25% (cumulative: 65%)

- cloudy: 20% (cumulative: 85%)

- bright: 10% (cumulative: 95%) ← stops here at top-p=0.9

- falling: 5% (excluded)
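The cutoff in this example is simple enough to sketch directly. The function below sorts tokens by probability, keeps adding them until the running total reaches top-p, and then renormalizes the survivors so they can be sampled from. The name `top_p_filter` is mine, and real implementations work on the full vocabulary rather than five tokens.

```python
def top_p_filter(probs, top_p):
    """Keep the most likely tokens until their cumulative probability reaches top_p."""
    kept, cumulative = {}, 0.0
    for token, p in sorted(probs.items(), key=lambda item: item[1], reverse=True):
        kept[token] = p
        cumulative += p
        if cumulative >= top_p:
            break
    # Renormalize so the surviving tokens form a valid distribution.
    total = sum(kept.values())
    return {token: p / total for token, p in kept.items()}

sky = {"blue": 0.40, "clear": 0.25, "cloudy": 0.20, "bright": 0.10, "falling": 0.05}
print(top_p_filter(sky, 0.9))
```

With top-p at 0.9 the first four tokens survive and "falling" can never be picked, no matter how the dice roll.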

The difference from temperature:

Temperature: Adjusts ALL probabilities

Top-p: Excludes low-probability tokens entirely

Typical values:

Top-p = 0.1: Very conservative (top 10% of probability mass)

Top-p = 0.5: Moderately conservative

Top-p = 0.9: Balanced (default for many models)

Top-p = 1.0: Consider all tokens (no filtering)

Pro tip: Tune EITHER temperature OR top-p and leave the other near its default. Changing both at once makes the results harder to predict.

Other Important Parameters

Max Tokens (Max Length)

Definition: Maximum number of tokens in the response.

Use cases:

Controlling costs (shorter = cheaper)

Enforcing brevity

Ensuring responses fit in UI elements

Common mistake:

❌ Setting max_tokens = 50 and asking for a detailed essay

Result: Response will be cut off mid-sentence

Best practice:

✅ Set max_tokens to slightly more than expected output

For 500-word response: max_tokens = 700-800

Frequency Penalty

Definition: Reduces likelihood of repeating the same tokens. Range: -2.0 to 2.0

How it works:

0: No penalty (default)

Positive values (0.5 - 1.0): Discourages repetition

Negative values: Encourages repetition (rarely useful)

Use when:

Model is being repetitive

You want more diverse vocabulary

Generating lists or variations

Example:

Without frequency penalty:

"The product is great. The product is amazing. The product is fantastic."

With frequency penalty (0.7):

"The product is great. It's amazing. This is fantastic."

Presence Penalty

Definition: Encourages the model to introduce new topics. Range: -2.0 to 2.0

How it works:

0: No penalty (default)

Positive values: Encourages talking about new concepts

Negative values: Encourages staying on topic (rarely used)

Use when:

Model keeps circling back to same points

You want broader coverage

Brainstorming diverse ideas

The difference:

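Frequency penalty: "Don't use the same WORDS repeatedly" (the penalty grows each time a token is reused)

Presence penalty: "Don't talk about the same TOPICS repeatedly" (a flat, one-time penalty on any token that has already appeared)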
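Under the hood, both penalties are small subtractions from a token's raw score before sampling. The sketch below follows the adjustment described in OpenAI's API documentation as I understand it. Other providers may implement these penalties differently or not at all, and the scores here are invented.

```python
from collections import Counter

def apply_penalties(logits, generated_tokens, frequency_penalty=0.0, presence_penalty=0.0):
    """Lower the scores of tokens that already appeared in the output.

    score - count * frequency_penalty - (1 if count > 0 else 0) * presence_penalty
    """
    counts = Counter(generated_tokens)
    adjusted = {}
    for token, score in logits.items():
        count = counts[token]
        seen = 1 if count > 0 else 0
        adjusted[token] = score - count * frequency_penalty - seen * presence_penalty
    return adjusted

# Hypothetical raw scores (logits) for the next token.
logits = {"product": 2.0, "it": 1.5, "this": 1.2}
already_written = ["the", "product", "is", "great", "the", "product"]
print(apply_penalties(logits, already_written, frequency_penalty=0.7))
print(apply_penalties(logits, already_written, presence_penalty=0.7))
```

Because "product" has already been used twice, the frequency penalty hits it twice, while the presence penalty hits it once. Tokens that have not appeared yet are left untouched in both cases.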

Stop Sequences

Definition: Strings that tell the model to stop generating as soon as they are produced.

Common use:

stop_sequences = ["\n\n", "###", "END"]

Use cases:

Generating until specific delimiter

Stopping at natural breakpoints

Controlling output format

Example:

Prompt: "List 5 ideas. Use ### to separate each idea."

Stop sequence: "###"

Output: "Idea 1: Product launch"

(generation stops at the first ###, which most APIs leave out of the returned text)
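To see the effect without calling an API, you can simulate it on a finished string. Real services stop generating at the stop sequence rather than trimming afterwards, so this is only a model of the visible result. The helper name and sample text are made up.

```python
def truncate_at_stop(text, stop_sequences):
    """Cut text at the earliest stop sequence; the stop sequence itself is dropped."""
    cut = len(text)
    for stop in stop_sequences:
        index = text.find(stop)
        if index != -1:
            cut = min(cut, index)
    return text[:cut]

raw = "Idea 1: Product launch ### Idea 2: Referral program ###"
print(repr(truncate_at_stop(raw, ["\n\n", "###", "END"])))
```

Notice what this means for the example prompt: if you ask for five ideas separated by ### and also set ### as a stop sequence, you will only ever get the first idea back.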

Parameter Combinations for Common Tasks

1. Factual Q&A

{
  "temperature": 0.1,
  "max_tokens": 500,
  "top_p": 0.9,
  "frequency_penalty": 0,
  "presence_penalty": 0
}

2. Creative Writing

{
  "temperature": 1.0,
  "max_tokens": 2000,
  "top_p": 0.95,
  "frequency_penalty": 0.5,
  "presence_penalty": 0.3
}

3. Code Generation

{
  "temperature": 0.2,
  "max_tokens": 1500,
  "top_p": 0.9,
  "frequency_penalty": 0.2,
  "presence_penalty": 0
}

4. Brainstorming

{
  "temperature": 1.2,
  "max_tokens": 1000,
  "top_p": 0.95,
  "frequency_penalty": 0.8,
  "presence_penalty": 0.6
}

5. Conversation

{
  "temperature": 0.7,
  "max_tokens": 800,
  "top_p": 0.9,
  "frequency_penalty": 0.3,
  "presence_penalty": 0.1
}

Experimentation Framework

To find optimal parameters:

Start with defaults (temp: 0.7, top_p: 0.9)

Test one variable at a time

Run multiple times (at temp > 0, outputs vary)

Measure results against your criteria

Document what works

Pro tip: Create a spreadsheet tracking:

| Task | Temperature | Top_p | Max_tokens | Quality (1-10) | Notes |

Part 5: Different Model Architectures (GPT, Claude, Gemini, LLaMA, and More)

Not all LLMs are created equal. Understanding the landscape helps you choose the right tool for the job.

The Major Players: A Comparative Overview

OpenAI GPT Series

GPT-3.5 Turbo

Size: Not officially disclosed (often assumed to be in the range of GPT-3's 175B parameters)

Context: 16K tokens

Strengths: Fast, cheap, good for most tasks

Weaknesses: Less capable than GPT-4, more hallucinations

Best for: High-volume, cost-sensitive applications

Cost: $0.0015/1K input, $0.002/1K output

GPT-4

Size: ~1.7T parameters (unconfirmed estimate; reportedly a mixture of experts)

Context: 8K, 32K, or 128K tokens

Strengths: Best reasoning, complex tasks, instruction following

Weaknesses: Expensive, slower

Best for: Complex analysis, high-stakes content, reasoning

Cost: $0.03/1K input, $0.06/1K output

GPT-4 Turbo

Updated GPT-4 with better performance and longer context

Context: 128K tokens

Cost: Slightly cheaper than GPT-4

GPT-4V (Vision)

Multimodal: text + images

Can analyze screenshots, diagrams, photos

Same core capabilities as GPT-4

Anthropic Claude Series

Claude 3 Haiku

Size: Smallest in Claude 3 family

Context: 200K tokens

Strengths: Fastest, cheapest Claude, massive context

Best for: Simple tasks needing large context

Cost: $0.00025/1K input, $0.00125/1K output

Claude 3 Sonnet

Size: Mid-tier

Context: 200K tokens

Strengths: Balance of capability and cost

Best for: Most production use cases

Cost: $0.003/1K input, $0.015/1K output

Claude 3.5 Sonnet

Updated Sonnet with improved performance

Better at: Coding, reasoning, nuanced tasks

Context: 200K tokens

Claude 3 Opus

Size: Largest Claude model

Context: 200K tokens

Strengths: Highest quality, excellent at complex reasoning

Best for: Challenging tasks, long-document analysis

Cost: $0.015/1K input, $0.075/1K output

Claude's Unique Characteristics:

✅ Constitutional AI (safety-focused training)

✅ Better at declining inappropriate requests

✅ Excellent at long-form analysis

✅ Strong performance on nuanced tasks

✅ Less verbose than GPT-4 (more concise)

Google Gemini Series

Gemini 1.0 Pro

Context: 32K tokens

Strengths: Multimodal (text, images, audio, video)

Best for: Applications needing multiple input types

Gemini 1.5 Pro

Context: 1M tokens (largest available)

Strengths: Analyzing entire codebases, books, video transcripts

Weaknesses: Long context = slower, expensive

Best for: Tasks requiring massive context

Gemini Ultra

Largest Gemini model

Competitive with GPT-4 and Claude 3 Opus

Multimodal capabilities

Gemini's Unique Characteristics:

✅ Native multimodal design (not bolted-on vision)

✅ Longest context window (1M tokens)

✅ Strong at technical/scientific tasks

✅ Deep Google integration

Meta LLaMA Series

LLaMA 2

Sizes: 7B, 13B, 70B parameters

License: Open source (with usage restrictions)

Context: 4K tokens (longer contexts are available through community fine-tunes)

Strengths: Can self-host, customize, fine-tune

Best for: Privacy-sensitive applications, customization needs

LLaMA 3

Improved over LLaMA 2

Better multilingual support

Enhanced reasoning

Open Source Advantages:

✅ Self-host (data stays internal)

✅ Fine-tune for specific domains

✅ No per-token costs (just compute)

✅ Full control over model behavior

Open Source Disadvantages:

❌ Requires infrastructure

❌ Need ML expertise for deployment

❌ Generally less capable than frontier models

❌ You handle all safety/moderation

Other Notable Models

Mistral AI

Mistral 7B: Efficient, competitive with 13B models

Mixtral 8x7B: Mixture of experts, strong performance

Open source: Similar benefits to LLaMA

Cohere

Command: Optimized for business applications

Strong at: Classification, embeddings, search

AI21 Jurassic

Jurassic-2: Various sizes

Focus: Multi-language, long-form content

Specialized Models Worth Knowing

Code-Specific Models

CodeLlama (Meta)

Based on LLaMA, trained on code

Better at programming than base LLaMA

StarCoder

Open source, 15B parameters

Trained on 80+ programming languages

Phind-CodeLlama

Fine-tuned CodeLlama for development

Embedding Models

OpenAI text-embedding-ada-002

For semantic search, clustering

1536 dimensions

Cohere Embed

Multilingual embeddings

Various size options

Sentence Transformers

Open source embedding models

Self-hostable

Model Comparison Matrix

| Feature | GPT-4 | Claude 3 Opus | Gemini Ultra | LLaMA 2 70B |
| --- | --- | --- | --- | --- |
| Reasoning | ★★★★★ | ★★★★★ | ★★★★☆ | ★★★☆☆ |
| Coding | ★★★★★ | ★★★★★ | ★★★★☆ | ★★★☆☆ |
| Creative Writing | ★★★★★ | ★★★★★ | ★★★★☆ | ★★★★☆ |
| Long Context | ★★★★☆ | ★★★★★ | ★★★★★ | ★★☆☆☆ |
| Speed | ★★★☆☆ | ★★★★☆ | ★★★☆☆ | ★★★★★ |
| Cost Efficiency | ★★☆☆☆ | ★★★☆☆ | ★★★☆☆ | ★★★★★ |
| Multilingual | ★★★★☆ | ★★★★☆ | ★★★★★ | ★★★☆☆ |
| Safety | ★★★★☆ | ★★★★★ | ★★★★☆ | ★★★☆☆ |

Architectural Differences That Matter

1. Mixture of Experts (MoE)

Used by: Mixtral, and reportedly GPT-4

How it works:

Multiple smaller "expert" models

Router network decides which experts to activate

Only activate relevant experts for each token

Advantages:

More efficient than single large model

Specialization (different experts for different domains)

Disadvantages:

More complex to train and deploy

Can be inconsistent
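The routing idea is easier to picture with a toy example. Below, four plain Python functions stand in for expert sub-networks, and a list of made-up router scores decides which two of them handle a token. Activating the top 2 experts matches how Mixtral is described publicly, but everything else here is a simplification and all names are invented.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, experts, token_value, top_k=2):
    """Send a token to the top_k experts and mix their outputs by router weight."""
    ranked = sorted(range(len(experts)), key=lambda i: router_scores[i], reverse=True)[:top_k]
    weights = softmax([router_scores[i] for i in ranked])
    output = sum(w * experts[i](token_value) for w, i in zip(weights, ranked))
    return output, ranked

# Four stand-in "experts": simple functions instead of neural sub-networks.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]
output, used = route_token([0.1, 2.0, -1.0, 1.0], experts, token_value=3.0)
print(used, output)
```

Only two of the four experts do any work for this token, which is where the efficiency comes from: the model can hold a lot of parameters while spending compute on just a fraction of them per token.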

2. Constitutional AI

Used by: Claude series

How it works:

Model is trained with explicit "constitution" of principles

Self-critiques and revises responses

Trained to explain refusals

Result: Claude tends to be more careful, explicit about limitations

3. Retrieval-Enhanced Generation

Used by: Some specialized models, Perplexity AI

How it works:

Model can search external sources

Grounds responses in retrieved information

Provides citations

Advantage: Reduced hallucinations, up-to-date information

4. Multimodal Architecture

Native multimodal (Gemini):

Trained on text, images, audio, video simultaneously

Better cross-modal understanding

Adapter multimodal (GPT-4V):

Base model + vision adapter

Still very capable but different architecture

Choosing the Right Model: Decision Framework

| GPT-4 | Claude 3 Opus | Claude 3.5 Sonnet | GPT-3.5 Turbo | Gemini 1.5 Pro | LLaMA/Mistral |
| --- | --- | --- | --- | --- | --- |
| Maximum quality is critical | Long-document analysis (200K context) | Need strong coding assistance | High volume, cost matters | Need 1M token context | Data privacy is critical (self-host) |
| Complex reasoning required | Nuanced, thoughtful responses needed | Balance of cost and quality | Simpler tasks | Analyzing entire codebases/books | Need to fine-tune |
| Budget allows | Safety/ethics are paramount | Production applications | Speed is priority | Multimodal inputs | High volume (no per-token cost) |
| Tasks are high-stakes | You prefer more concise outputs | Large context helpful but not required | Good enough > perfect | Google ecosystem integration | Have ML infrastructure |

Model Performance on Common Tasks

| Tasks | Models |
| --- | --- |
| Code Generation | Claude 3.5 Sonnet (best), GPT-4, GPT-3.5 Turbo, LLaMA 2 70B |
| Creative Writing | Claude 3 Opus, GPT-4, Claude 3.5 Sonnet, GPT-3.5 Turbo |
| Factual Q&A | GPT-4, Claude 3 Opus, Gemini Ultra, Claude 3.5 Sonnet |
| Long-Document Analysis | Claude 3 Opus (200K), Gemini 1.5 Pro (1M), GPT-4 Turbo (128K), Claude 3.5 Sonnet (200K) |
| Cost per Quality | Claude 3.5 Sonnet, GPT-3.5 Turbo, Claude 3 Haiku, Gemini Pro |
| Reasoning & Logic | GPT-4, Claude 3 Opus, Claude 3.5 Sonnet, Gemini Ultra |

Part 6: Putting It All Together

Your Model Selection Worksheet

Answer these questions to choose the right model:

1. What's your primary task?

Simple Q&A → GPT-3.5 Turbo, Claude Haiku

Complex reasoning → GPT-4, Claude 3 Opus

Coding → Claude 3.5 Sonnet, GPT-4

Creative writing → Claude 3 Opus, GPT-4

Document analysis → Claude 3 Opus, Gemini 1.5 Pro

2. What's your context requirement?

< 8K tokens → Any model

8K-32K tokens → GPT-4, Claude, Gemini Pro

32K-200K tokens → Claude 3, GPT-4 Turbo

200K+ tokens → Gemini 1.5 Pro

3. What's your volume?

Low (< 1M tokens/month) → Use best quality

Medium (1M-10M) → Balance cost/quality

High (> 10M) → Cost-optimize or self-host

4. What's your budget per 1K tokens?

< $0.01 → GPT-3.5, Claude Haiku, self-host

$0.01-$0.05 → Claude 3.5 Sonnet, GPT-4 Turbo

> $0.05 → GPT-4, Claude 3 Opus (when needed)

5. What's your latency requirement?

Real-time (<2s) → GPT-3.5 Turbo, Claude Haiku

Interactive (<5s) → Most models

Batch processing → Any model, optimize for cost

6. Do you need special capabilities?

Vision → GPT-4V, Gemini

Massive context → Gemini 1.5 Pro

Self-hosting → LLaMA, Mistral

Maximum safety → Claude 3

Practical Exercises

Exercise 1: Token Counting

Test these prompts in a tokenizer:

"Hello world"

"The quick brown fox jumps over the lazy dog"

Your name (if it's unusual, see how it tokenizes)

A sentence in another language you speak

A code snippet

What did you learn about tokenization?

Exercise 2: Temperature Experiments

Use the same prompt with different temperatures:

Prompt: "Write a product description for wireless headphones"

Try:

Temperature 0

Temperature 0.7

Temperature 1.2

Compare: Consistency, creativity, quality

Exercise 3: Context Window Testing

Take a long document (5,000+ words). Try:

Summarizing the whole thing

Asking about information at the beginning

Asking about information in the middle

Asking about information at the end

Notice: Where accuracy is highest/lowest

Exercise 4: Model Comparison

Same prompt, three different models:

Prompt: "Explain quantum entanglement to a high school student"

Test with:

GPT-3.5 Turbo

Claude 3.5 Sonnet

GPT-4 (if available)

Compare: Clarity, accuracy, style, length

Common Mistakes to Avoid

❌ Mistake 1: Ignoring tokenization

"I'll just write naturally and not worry about it"

Problem: Hitting context limits unexpectedly, wasting tokens

❌ Mistake 2: Using maximum context always

"I'll always use the longest context available"

Problem: Slower, more expensive, worse quality at high capacity

❌ Mistake 3: Default parameters for everything

"I'll just use temperature 0.7 for all tasks"

Problem: Suboptimal results—code generation needs lower, creative needs higher

❌ Mistake 4: Not testing different models

"GPT-4 is best, so I'll use it for everything"

Problem: Overpaying for simple tasks, missing specialized strengths

❌ Mistake 5: Assuming determinism at temp > 0

"I ran it once, so that's what it always does"

Problem: Inconsistent results surprise you in production

❌ Mistake 6: Exceeding context without checking

"I'll just paste this whole document"

Problem: Truncated results, missed information, wasted tokens

❌ Mistake 7: Treating all models the same

"They're all LLMs, so prompts should work identically"

Problem: Each model has quirks, optimal prompting differs

Key Takeaways: What You Must Remember

🔹 LLMs predict text, they don't understand it

This explains why they can be confidently wrong.

🔹 Tokens ≠ words

Budget and plan in tokens, not words.

🔹 Context windows are your constraint

Design prompts that fit. Put critical info at the start/end.

🔹 Temperature controls creativity

Low for facts, high for creativity.

🔹 Different models have different strengths

Choose based on task, not just "best overall."

🔹 Parameters matter as much as the prompt

The same prompt with different parameters produces different results.

🔹 Bigger isn't always better

A well-prompted smaller model beats a poorly-prompted larger one.

🔹 Context quality > context quantity

200K tokens of irrelevant information < 2K tokens of perfect context.

What's Next

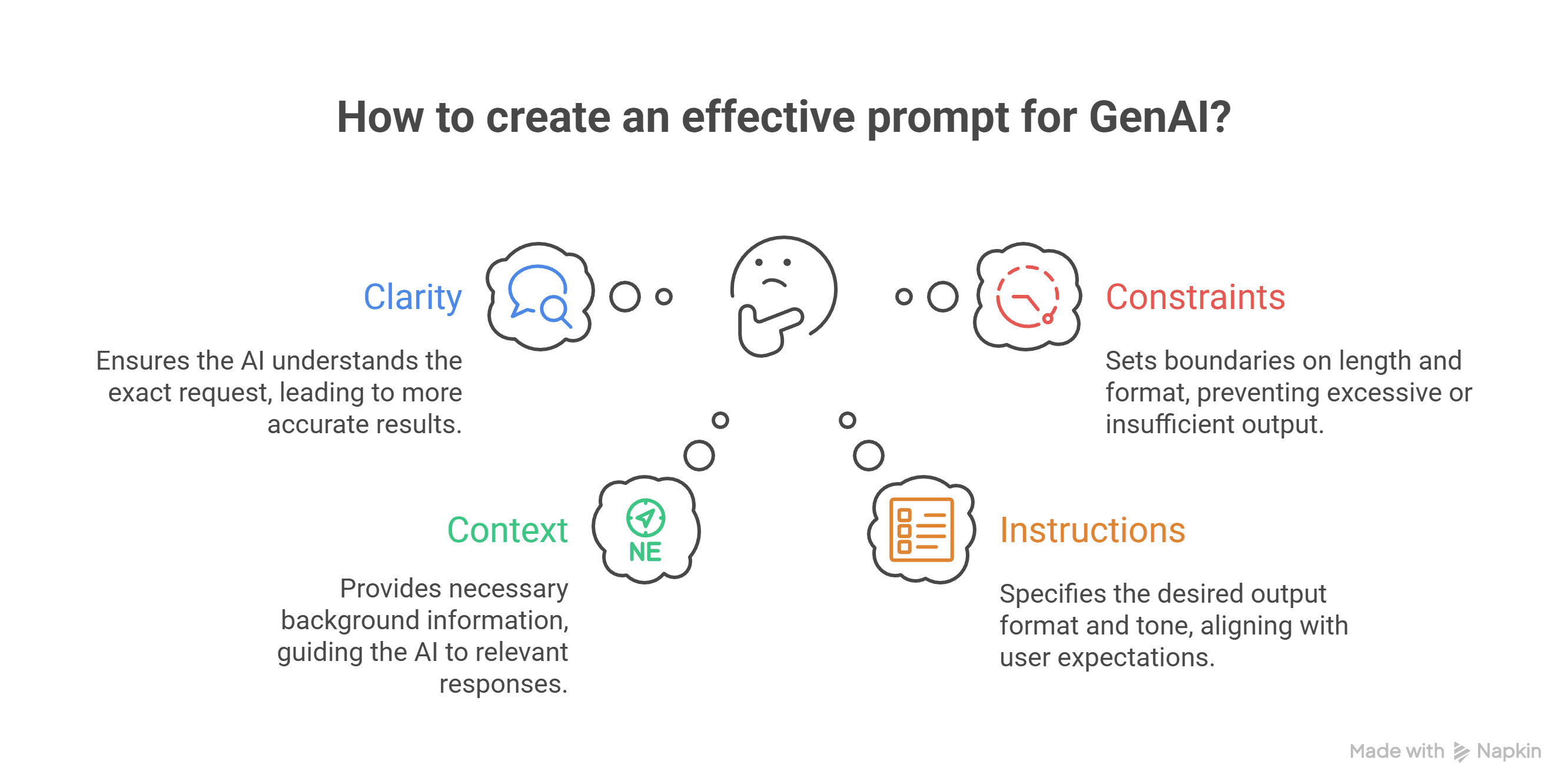

In Post #3: "Anatomy of an Effective Prompt", we'll take everything you've learned about how models work and translate it into practical prompt construction:

The essential components every prompt needs

Clear vs. vague instructions (with examples)

How to provide context effectively

Formatting outputs exactly as you want

Common beginner mistakes and how to avoid them

Your first prompt templates

Now you understand the engine. Next, you'll learn to drive it masterfully.

Resource Links

Research Papers:

"Attention is All You Need" (Transformers)

"Lost in the Middle" (Context window study)

"Constitutional AI" (Anthropic's approach)

Your Assignment Before Post #3

Experiment with at least two different models on the same task

Try the same prompt with different temperatures (0, 0.7, 1.2)

Check the token count of your typical prompts

Test a long document against context limits

Document your findings—what surprised you?

Share your discoveries in the comments! What worked? What didn't? What confused you?

Next up: Post #3 - "Anatomy of an Effective Prompt"

You now understand the machine. Time to master the interface.

Questions? Confused about anything? Drop a comment—I read and respond to all of them. This series only gets better with your input.

Welcome to Level 2 of prompt engineering mastery. You're building something powerful.