What is Prompt Engineering? A Complete Introduction

I am a developer and an artist, and I'm trying my luck at blogging as well. I am ambitious, passionate about learning, a night owl, and I like challenges...

Welcome to the future of human-AI collaboration. If you're reading this in 2024-2025, you're witnessing a fundamental shift in how humans interact with machines—and prompt engineering is your passport to this new world.

The Definition: What Exactly IS Prompt Engineering?

Prompt engineering is the art and science of crafting instructions that guide artificial intelligence systems to produce desired outputs. It's the bridge between human intention and machine capability, the translator between what you want and what AI can deliver.

But let's be more precise:

Prompt Engineering (noun): The systematic process of designing, testing, and optimizing input instructions (prompts) to elicit specific, accurate, and useful responses from large language models (LLMs) and other AI systems.

Think of it this way:

If traditional programming is writing explicit code that tells a computer exactly what to do, step by step...

Prompt engineering is more like directing a highly intelligent but literal-minded assistant—you communicate your intent, provide context, and guide the AI toward the output you need.

The key difference? You're not writing in Python or Java. You're writing in natural language—English, Spanish, Chinese, or any human language. Yet the precision, testing, and optimization required rivals traditional programming.

Why This Matters More Than You Think

Here's a statement that might sound hyperbolic but isn't: Prompt engineering is becoming one of the most valuable skills of the 21st century.

Consider these facts:

1. The Productivity Multiplier

A skilled prompt engineer can accomplish in 10 minutes what might take hours or days manually

Some companies report 30-80% productivity gains in tasks ranging from customer service to code generation, though results vary widely by task

The same AI model produces vastly different results depending on how you prompt it

2. The Democratization of Expertise

You don't need a computer science degree

You don't need to understand neural network architectures

You DO need to understand how to communicate effectively with AI

3. The Economic Impact

Some prompt engineering roles at top companies have been advertised at $175,000-$335,000+ annually

Every industry—from healthcare to entertainment—needs this skill

It's not replacing jobs; it's creating entirely new categories of work

The bottom line: In an AI-first world, your ability to communicate with AI systems is as fundamental as literacy itself.

A Brief History: How We Got Here

Understanding where prompt engineering came from helps you see where it's going.

Phase 1: The Command Line Era (1950s-2000s)

Human-computer interaction was rigid: You typed exact commands

COPY A:\FILE.TXT C:\BACKUP\ worked. "copy the file" didn't.

Zero tolerance for ambiguity

Phase 2: Search and Keywords (1990s-2010s)

Google taught us to think in keywords

"best pizza near me" vs. "What is the highest-rated pizza restaurant within 2 miles?"

We learned to speak "search engine"

Phase 3: The Dawn of Neural Networks (2010-2017)

Deep learning models emerged but were specialized

Image recognition, speech-to-text, game-playing AI

Still not conversational

Phase 4: The Transformer Revolution (2017-2020)

June 2017: Google researchers publish "Attention Is All You Need" (the Transformer paper)

June 2018: OpenAI releases GPT (Generative Pre-trained Transformer)

Breakthrough: Models could understand context across long passages

But here's the catch: Early users discovered that how you asked dramatically changed what you got

Phase 5: GPT-3 and the Birth of Prompt Engineering (2020-2022)

Summer 2020: OpenAI releases GPT-3 with 175 billion parameters

Researchers and early adopters noticed: Slight prompt variations → Wildly different outputs

The term "prompt engineering" gains traction

Key insight: These models respond to examples, structure, and specific phrasing

An illustrative example of the difference:

❌ Bad Prompt: "Write about climate change"

(Result: Generic, unfocused text)

✅ Better Prompt: "You are a climate scientist writing for policymakers. Explain the three most urgent climate actions governments should take in 2024, with specific data and examples. Format as: Action | Rationale | Expected Impact"

(Result: Structured, authoritative, actionable)

Phase 6: The Prompt Engineering Explosion (2022-Present)

November 2022: ChatGPT launches and reaches an estimated 100M users in about 2 months

Millions of people suddenly need to learn prompting

Key developments:

Chain-of-thought prompting (Wei et al., 2022)

Instruction-following models (InstructGPT, Claude)

Multimodal models (GPT-4V, Gemini)

Specialized prompting frameworks emerge

Phase 7: Professional Practice (2023-Present)

Companies hire dedicated prompt engineers

Academic research explodes (200+ papers in 2023 alone)

Tools and platforms emerge (LangChain, PromptBase, etc.)

Prompt engineering becomes a formal discipline

The trajectory is clear: We went from "type exact commands" → "think in keywords" → "architect precise instructions in natural language."

Why Prompt Engineering Matters in the AI Era

Let's get concrete about why this skill is essential right now.

1. The AI Capability Gap

The Problem: Modern AI can do amazing things—but only if you know how to ask.

Real Example:

Generic prompt: "Help me with marketing" → Vague, generic advice

Engineered prompt: "I'm launching a B2B SaaS product for healthcare compliance. Create a 90-day content marketing strategy targeting hospital CIOs, including: (1) content themes by week, (2) distribution channels with rationale, (3) KPIs to track, (4) budget allocation across channels. Present in table format." → Detailed, actionable strategy

The same AI. Dramatically different value.

2. The Quality-Cost Equation

Important Reality: AI API costs are based on tokens (word fragments; roughly three-quarters of a word each in English)

Poor prompt: Uses 500 tokens, gets mediocre result, needs 3 follow-ups = 2,000 tokens total

Engineered prompt: Uses 200 tokens, gets excellent result on first try = 200 tokens total

That's a 10x efficiency difference. At scale, this means thousands or millions in savings.
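The arithmetic above can be sketched in a few lines. The price per 1,000 tokens here is a made-up placeholder, not any provider's real rate, and the sketch only counts the tokens from the example. Real bills also charge for output tokens, often at a different rate.

```python
# Hypothetical price; check your provider's current pricing page.
PRICE_PER_1K_TOKENS = 0.01  # dollars, assumed for illustration only


def cost(tokens: int, price_per_1k: float = PRICE_PER_1K_TOKENS) -> float:
    """Return the dollar cost of processing `tokens` tokens."""
    return tokens / 1000 * price_per_1k


poor_total = 2_000       # 500-token prompt plus three follow-ups (from the example)
engineered_total = 200   # one well-built prompt, one try

ratio = poor_total / engineered_total
savings_per_million_requests = (cost(poor_total) - cost(engineered_total)) * 1_000_000

print(f"Efficiency ratio: {ratio:.0f}x")
print(f"Savings per 1M requests: ${savings_per_million_requests:,.0f}")
```

Swap in your own token counts and prices to see whether the savings matter at your volume.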

3. The Accuracy-Safety Imperative

AI systems can:

Generate false information (hallucinations)

Exhibit biases

Miss crucial nuances

Produce inconsistent results

Prompt engineering mitigates these issues through:

Explicit constraints and guidelines

Requests for citations and verification

Step-by-step reasoning (showing work)

Format specifications that enable validation

In high-stakes domains (healthcare, legal, financial), good prompting isn't optional—it's essential.
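To make "format specifications that enable validation" concrete, here is a minimal sketch. It assumes you asked the model to reply with a JSON object containing `answer` and `sources` keys. Those key names are hypothetical, chosen only for this illustration.

```python
import json

REQUIRED_KEYS = {"answer", "sources"}  # hypothetical schema for illustration


def validate_response(raw: str) -> tuple[bool, str]:
    """Check that a model response is a JSON object with the required keys."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as err:
        return False, f"not valid JSON: {err.msg}"
    if not isinstance(data, dict):
        return False, "expected a JSON object"
    missing = REQUIRED_KEYS - set(data)
    if missing:
        return False, f"missing keys: {sorted(missing)}"
    if not data["sources"]:
        return False, "no sources cited"
    return True, "ok"


print(validate_response('{"answer": "42", "sources": ["internal-doc-7"]}'))
print(validate_response("Sure! Here is your answer..."))
```

A check like this catches malformed or uncited responses automatically. It does not verify that the cited sources are real or that the answer is correct, so human review still matters in high-stakes settings.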

4. The Competitive Advantage

Here's the uncomfortable truth: Your competitors are using AI. The question is: Are they using it well?

Companies with strong prompt engineering capabilities:

Launch products faster (rapid prototyping with AI)

Scale operations efficiently (AI handles routine work)

Innovate constantly (AI as ideation partner)

Reduce operational costs (automation with accuracy)

The gap between companies that prompt well and those that don't will widen dramatically.

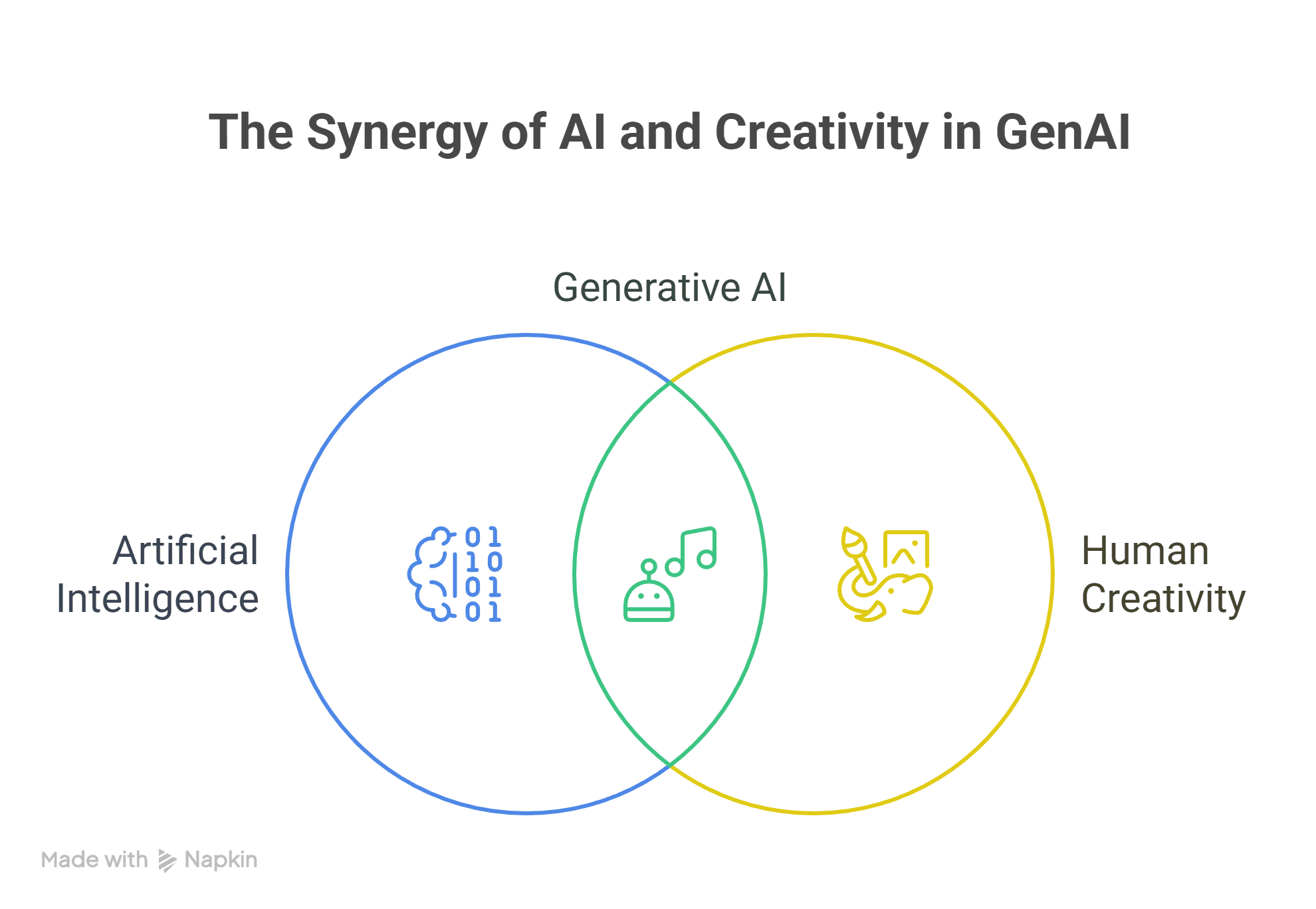

5. The Human-AI Collaboration Future

This isn't about replacement—it's about augmentation.

The future workplace has:

Humans: Strategy, creativity, empathy, judgment, domain expertise

AI: Pattern recognition, information synthesis, rapid generation, 24/7 availability

Prompt Engineering: The connector between the two

Your value isn't just what you know—it's how well you can leverage AI to amplify what you know.

Key Terminology Glossary

Let's build your foundational vocabulary. Master these terms—they're the language of prompt engineering.

Core Concepts

🔹 Prompt

The input instruction or query you provide to an AI model. Can be a question, command, or complex multi-part instruction.

- Example: "Explain quantum computing to a 10-year-old"

🔹 Completion / Response / Output

What the AI generates based on your prompt.

🔹 Token

The basic unit of text for LLMs. Roughly 1 token ≈ 4 characters or 0.75 words in English.

"Hello world!" = 3 tokens (with OpenAI's GPT tokenizers; counts vary by model)

Why it matters: Models have token limits; API costs are token-based

🔹 Context Window

The maximum amount of text (in tokens) a model can process at once—including both your prompt and its response.

GPT-4: 8K-128K tokens, depending on the version

Claude 3: Up to 200K tokens

Practical impact: Determines how much information you can include
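Here is a rough sketch of how the token rule of thumb and the context window fit together. It uses the "about 4 characters per token" approximation rather than a real tokenizer, and the 8,000-token window is an assumed value for illustration. For exact counts, use the tokenizer that matches your model.

```python
import math

CONTEXT_WINDOW = 8_000  # tokens; assumed value for illustration


def estimate_tokens(text: str) -> int:
    """Rough token estimate using the ~4 characters per token rule of thumb."""
    return math.ceil(len(text) / 4)


def fits_context(prompt: str, max_response_tokens: int, window: int = CONTEXT_WINDOW) -> bool:
    """True if the prompt plus the reserved response budget fits in the window."""
    return estimate_tokens(prompt) + max_response_tokens <= window


short_prompt = "Summarize this paragraph in one sentence."
long_prompt = "word " * 10_000  # 50,000 characters

print(estimate_tokens("Hello world!"))
print(fits_context(short_prompt, max_response_tokens=500))
print(fits_context(long_prompt, max_response_tokens=500))
```

The key design point is that the response budget counts against the window too, so a prompt that "just fits" can still leave the model no room to answer.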

🔹 Temperature

A setting (commonly 0.0 to 2.0; some providers cap it at 1.0) controlling randomness in AI responses:

Low (0-0.3): Focused, near-deterministic, consistent → Use for factual tasks

Medium (0.3-1.0): Balanced creativity → General use

High (above 1.0): Creative, varied, unpredictable → Use for brainstorming
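If you're curious what temperature does under the hood, this sketch shows the usual idea: the model's raw scores (logits) are divided by the temperature before being turned into probabilities. The three scores below are invented for illustration, and real systems typically treat a temperature of exactly 0 as "always pick the top token" rather than dividing by zero.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores to probabilities, scaled by temperature (> 0)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next tokens.
logits = [2.0, 1.0, 0.1]

for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Run it and compare the rows: at low temperature almost all the probability lands on the top-scoring token, while at high temperature the distribution flattens and less likely tokens get picked more often.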

🔹 Top-p (Nucleus Sampling)

Alternative to temperature; controls diversity by sampling only from the smallest set of most-likely next tokens whose combined probability reaches p.

Low (0.1): Very focused

High (0.9): More diverse
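A minimal sketch of the nucleus idea, assuming we already have next-token probabilities in hand (the tokens and numbers below are invented): keep the most likely tokens until their combined probability reaches p, drop the rest, and renormalize.

```python
def top_p_filter(probs: dict[str, float], p: float) -> dict[str, float]:
    """Keep the smallest set of most-likely tokens whose total probability
    reaches p, then renormalize so the kept probabilities sum to 1."""
    kept: dict[str, float] = {}
    cumulative = 0.0
    for token, prob in sorted(probs.items(), key=lambda item: item[1], reverse=True):
        kept[token] = prob
        cumulative += prob
        if cumulative >= p:
            break
    total = sum(kept.values())
    return {token: prob / total for token, prob in kept.items()}


# Hypothetical next-token distribution.
next_token_probs = {"cat": 0.5, "dog": 0.3, "fish": 0.15, "xylophone": 0.05}

print(top_p_filter(next_token_probs, p=0.1))  # only the top token survives
print(top_p_filter(next_token_probs, p=0.9))  # the three most likely tokens survive
```

Notice that the unlikely "xylophone" is cut in both cases. That is the practical appeal of top-p: it trims the long tail of odd choices while leaving room for variety among plausible ones.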

Prompting Techniques

🔹 Zero-Shot Prompting

Asking the AI to perform a task without any examples.

- "Translate this to French: [text]"

🔹 Few-Shot Prompting

Providing examples in your prompt to guide the AI's response format and style.

Example 1: [input] → [output]

Example 2: [input] → [output]

Now do: [your input]
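If you build few-shot prompts often, a small helper keeps the format consistent. This is one possible sketch; the "Input:" and "Output:" labels and the sentiment examples are my own choices for illustration, not a required format.

```python
def build_few_shot_prompt(examples: list[tuple[str, str]], new_input: str) -> str:
    """Format (input, output) pairs followed by the new input to complete."""
    blocks = [f"Input: {inp}\nOutput: {out}" for inp, out in examples]
    blocks.append(f"Input: {new_input}\nOutput:")
    return "\n\n".join(blocks)


examples = [
    ("The food was amazing!", "positive"),
    ("I waited an hour and left hungry.", "negative"),
]
prompt = build_few_shot_prompt(examples, "Friendly staff, great coffee.")
print(prompt)
```

Ending the prompt with an empty "Output:" nudges the model to continue the pattern instead of commenting on it.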

🔹 Chain-of-Thought (CoT)

Prompting the AI to show its reasoning step-by-step before answering.

"Let's solve this step by step:"

Often improves accuracy on multi-step reasoning tasks

🔹 System Prompt / System Message

A special instruction that sets the AI's behavior for an entire conversation (not all models expose this).

- "You are a helpful Python programming tutor..."
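In code, a system prompt usually travels alongside the user's message rather than inside it. The sketch below uses the role/content message list that several chat APIs accept. Treat the exact shape as an assumption, since some providers take the system prompt as a separate parameter instead.

```python
def build_messages(system_prompt: str, user_prompt: str) -> list[dict[str, str]]:
    """Pair a conversation-wide system prompt with a single user message."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


messages = build_messages(
    "You are a helpful Python programming tutor. Explain concepts with short examples.",
    "What is a list comprehension?",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Keeping the system prompt separate means you can reuse it across every turn of the conversation and change the user message freely.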

🔹 Role Prompting

Assigning the AI a specific role or persona.

- "Act as a senior financial analyst..."

🔹 Prompt Chaining

Using the output of one prompt as input to another, breaking complex tasks into steps.
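Here is a bare-bones sketch of chaining. The `fake_model` function is a stand-in I made up so the example runs without an API key; in practice you would replace it with a call to your model of choice. The two step templates are also just illustrations.

```python
from typing import Callable


def run_chain(call_model: Callable[[str], str], templates: list[str], initial_input: str) -> str:
    """Feed each step's output into the next template's {input} slot."""
    current = initial_input
    for template in templates:
        current = call_model(template.format(input=current))
    return current


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call; it only echoes the prompt in brackets."""
    return f"[response to: {prompt}]"


steps = [
    "Summarize the following text in three bullet points:\n{input}",
    "Turn these bullet points into a tweet:\n{input}",
]
result = run_chain(fake_model, steps, "Long article text goes here.")
print(result)
```

Because each step is a separate call, you can inspect or validate the intermediate output before passing it on, which is much harder with one giant prompt.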

Model Behaviors

🔹 Hallucination

When an AI generates false information with confidence.

Critical to understand: AI doesn't "know" things; it predicts plausible text

Mitigation: Request citations, use RAG, verify outputs

🔹 Bias

Systematic errors reflecting biases in training data (gender, race, cultural, etc.)

- Your responsibility: Prompt carefully, validate outputs

🔹 Instruction Following

How well a model adheres to explicit directions in your prompt.

- Varies by model: GPT-4, Claude, and Gemini are specifically trained for this

🔹 Steerability

The degree to which you can control a model's output through prompting.

Advanced Concepts

🔹 Fine-Tuning

Training a model further on specific data (beyond prompting).

- When prompting isn't enough: Highly specialized domains, consistent formatting needs

🔹 Retrieval-Augmented Generation (RAG)

Combining LLMs with external knowledge sources (databases, documents).

- Helps with: Knowledge cutoffs, proprietary information, and reducing hallucinations

🔹 Embeddings

Numerical representations of text that capture semantic meaning.

- Used for: Semantic search, finding similar content, RAG systems
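To see how embeddings power semantic search (and the retrieval step of RAG), here is a toy sketch. The three-number vectors are invented by hand; real embeddings come from an embedding model and have hundreds or thousands of dimensions. Cosine similarity is one common way to compare them.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy "embeddings" made up for illustration.
documents = {
    "refund policy": [0.9, 0.1, 0.0],
    "shipping times": [0.1, 0.9, 0.1],
    "office dog photos": [0.0, 0.1, 0.9],
}
query_vector = [0.8, 0.2, 0.1]  # pretend embedding of "How do I get my money back?"

best = max(documents, key=lambda name: cosine_similarity(query_vector, documents[name]))
print(best)
```

In a RAG system, the best-matching document text would then be pasted into the prompt as context, so the query never has to share exact keywords with the document.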

🔹 Tokens per Minute (TPM) / Requests per Minute (RPM)

Rate limits on API usage.

🔹 Latency

Time between sending a prompt and receiving the complete response.

Evaluation Metrics

🔹 Accuracy

How often outputs are factually correct.

🔹 Relevance

How well outputs address the actual query.

🔹 Coherence

How logically consistent and well-structured outputs are.

🔹 Fluency

How natural and grammatically correct the text is.

🔹 Consistency

How similar outputs are for repeated identical prompts (when temperature is low).
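Consistency is the easiest of these metrics to measure yourself. One crude way, sketched below, is to run the same prompt several times and see what share of the outputs agree with the most common one. Exact-match agreement is my simplifying assumption here; it works for short answers, while longer text needs a fuzzier comparison.

```python
from collections import Counter


def consistency(outputs: list[str]) -> float:
    """Share of outputs that match the most common output (case-insensitive)."""
    if not outputs:
        raise ValueError("need at least one output")
    counts = Counter(output.strip().lower() for output in outputs)
    most_common_count = counts.most_common(1)[0][1]
    return most_common_count / len(outputs)


# Pretend these came from running one prompt four times.
runs = ["Paris", "paris", "Paris ", "Lyon"]
print(consistency(runs))
```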

The Critical Mindset Shift

Before we go further, you need to understand something fundamental:

AI Doesn't "Understand"—It Predicts

This is crucial: When you prompt an LLM, you're not accessing a database of facts. You're activating a massive statistical model that predicts a likely next token (roughly, a word fragment), then the next, then the next.

What this means:

✅ It can sound confident while being completely wrong

✅ The same prompt can yield different results (whenever temperature is above 0, and more so at higher values)

✅ It has no real-time information (unless connected to search/APIs)

✅ It can't truly "think"—it generates plausible continuations

Why this matters for prompt engineering:

Your job is to set up the statistical probability space so that useful, accurate outputs are most likely. You do this through:

Clear instructions

Relevant context

Examples and patterns

Constraints and formats

Verification steps

Think of yourself as a conductor: The orchestra (AI) has incredible capability, but the quality of the symphony depends entirely on how you direct it.

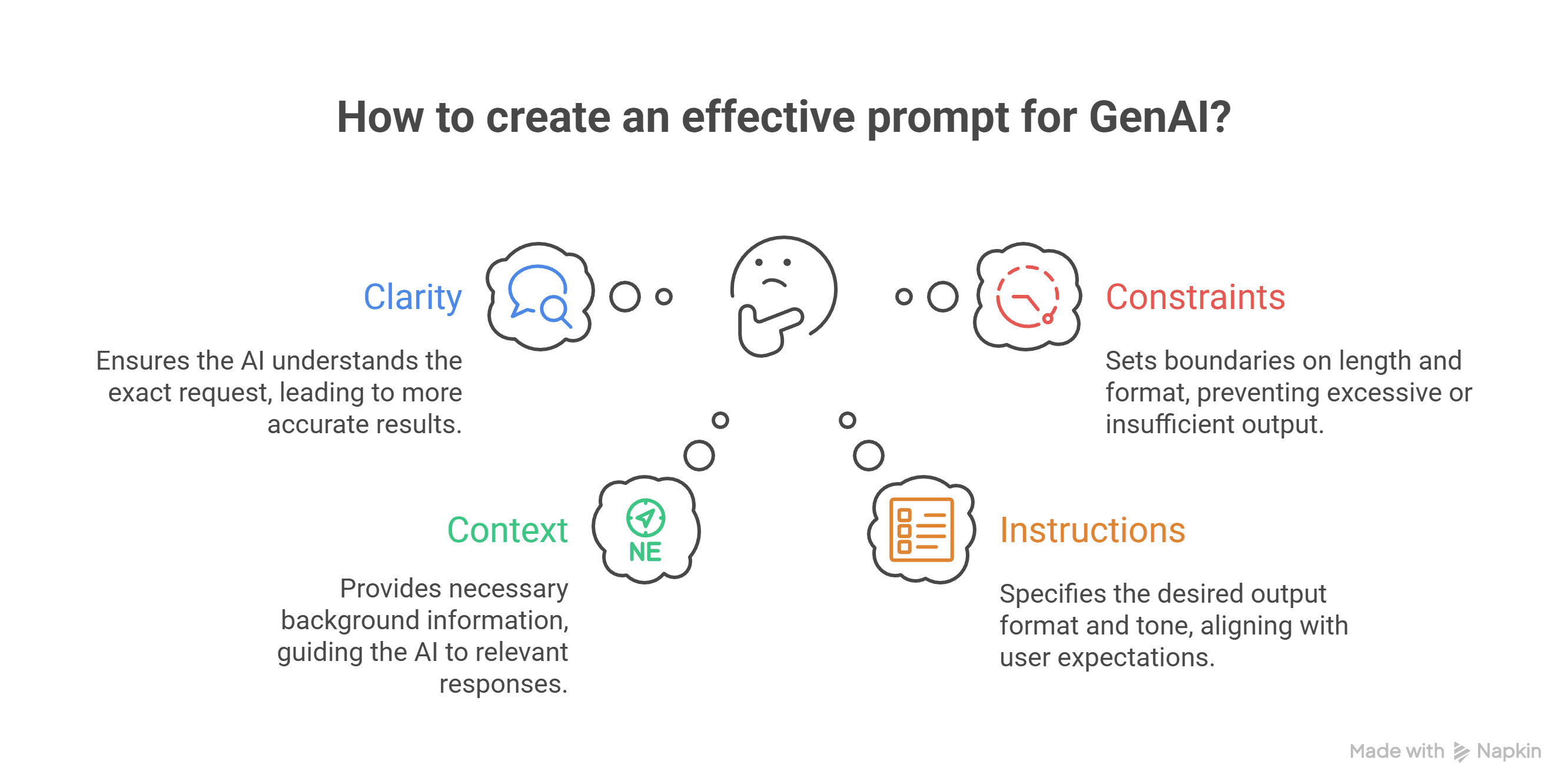

The Three Pillars of Effective Prompting

As you go through this series, everything traces back to these three principles:

1. Clarity

Ambiguous prompts = Unpredictable outputs

Compare:

❌ "Write about cars"

✅ "Write a 500-word article explaining the three main differences between hybrid and electric vehicles for consumers considering their next car purchase. Include cost, environmental impact, and practical considerations."

2. Context

Give the AI what it needs to understand your situation

Compare:

❌ "How should I respond?"

✅ "I'm a startup founder. A major client just asked for a 50% discount or they'll switch to a competitor. We'd lose $100K annually but can't afford the discount. Draft a diplomatic email response that: (1) acknowledges their concerns, (2) offers alternative value-adds instead of discounts, (3) keeps the relationship positive."

3. Constraints

Define the boundaries of acceptable outputs

Compare:

❌ "Give me marketing ideas"

✅ "Generate 5 marketing campaign ideas for a B2B cybersecurity product. Requirements: (1) Budget under $10K, (2) Focus on LinkedIn and industry conferences, (3) Target IT directors at mid-size healthcare companies, (4) Measurable within 60 days. Format: Campaign name | Tactic | Budget | Expected metric."

Master these three, and you're 80% of the way to effective prompting.
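One way to make the three pillars a habit is to bake them into a template. The helper below is a sketch, and its section labels ("Context", "Task", "Requirements", "Format") are my own convention rather than anything models require. It simply refuses to let you forget a pillar.

```python
def build_prompt(task: str, context: str, constraints: list[str], output_format: str = "") -> str:
    """Assemble a prompt with explicit context, task, and constraint sections."""
    parts = [f"Context: {context}", f"Task: {task}"]
    if constraints:
        numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(constraints, start=1))
        parts.append(f"Requirements:\n{numbered}")
    if output_format:
        parts.append(f"Format: {output_format}")
    return "\n\n".join(parts)


prompt = build_prompt(
    task="Generate 5 marketing campaign ideas.",
    context="We sell a B2B cybersecurity product to mid-size healthcare companies.",
    constraints=[
        "Budget under $10K",
        "Focus on LinkedIn and industry conferences",
        "Target IT directors",
        "Measurable within 60 days",
    ],
    output_format="Campaign name | Tactic | Budget | Expected metric",
)
print(prompt)
```

Clarity lives in the task line, context gets its own section, and constraints become a numbered list you can check the output against.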

Your First Exercise

Let's make this practical immediately. Try this exercise:

Task: Get an AI to write you a professional email.

Attempt 1 (Poor prompt):

Write an email

Attempt 2 (Better):

Write a professional email declining a job offer

Attempt 3 (Good):

Write a professional email declining a job offer. I'm declining because I accepted another position. Keep it brief, polite, and leave the door open for future opportunities.

Attempt 4 (Excellent):

Context: I interviewed for a Senior Product Manager role at TechCorp. They offered me the position, but I've accepted a role at a different company that aligns better with my career goals.

Task: Draft a professional email declining their offer.

Requirements:

- Tone: Grateful and professional, not apologetic

- Length: 3-4 short paragraphs

- Include: (1) gratitude for the offer, (2) clear decision to decline, (3) brief reason (accepted another opportunity), (4) expression of interest in staying connected

- Avoid: Over-explaining, leaving ambiguity about decision

Recipient: Jennifer Martinez, Hiring Manager

Notice the progression? Each version gives the AI more to work with—more clarity, context, and constraints.

Your assignment: Try all four versions with your AI of choice. Compare the outputs. Feel the difference.

Common Misconceptions to Abandon Now

❌ Misconception 1: "AI is magic—it should just know what I want"

✅ Reality: AI is powerful pattern matching. Garbage in = garbage out.

❌ Misconception 2: "Longer prompts are always better"

✅ Reality: Precision beats length. Concise, well-structured prompts often outperform verbose ones.

❌ Misconception 3: "Prompt engineering is just for technical people"

✅ Reality: It's a communication skill. Writers, marketers, and domain experts often excel.

❌ Misconception 4: "Once I find a good prompt, I'm done"

✅ Reality: Prompts need iteration, testing, and maintenance as models evolve.

❌ Misconception 5: "AI will make my job obsolete"

✅ Reality: People who use AI well will replace people who don't. The tool amplifies; it doesn't replace judgment.

The Ethical Dimension

Before we conclude, let's address the elephant in the room: With great prompting power comes great responsibility.

Key ethical considerations:

1. Transparency

Be clear when content is AI-generated

Don't misrepresent AI outputs as human work (where it matters)

2. Verification

Always fact-check AI outputs for important decisions

Don't blindly trust—AI makes mistakes

3. Bias Awareness

Recognize that AI inherits biases from training data

Test your prompts for potential biased outputs

Actively prompt for balanced perspectives

4. Privacy

Never input confidential, private, or sensitive information into public AI systems

Understand data retention policies

5. Attribution

When AI helps create something, consider appropriate attribution

Respect intellectual property laws (evolving rapidly)

6. Impact

Consider the downstream effects of scaled AI automation

Use the technology to augment human capability, not exploit vulnerabilities

We'll dedicate an entire post to this, but start thinking about it now.

What Makes a Prompt Engineer?

You might be wondering: "Am I cut out for this?"

Here's what actually predicts success:

✅ Curiosity: Willingness to experiment and iterate

✅ Clarity of Thought: Ability to articulate what you want precisely

✅ Domain Knowledge: Understanding the subject matter you're prompting about

✅ Pattern Recognition: Noticing what works and what doesn't

✅ Patience: Testing and refining until you get it right

Not required:

❌ Computer science degree

❌ Math expertise

❌ Programming background (though it helps)

The best prompt engineers I know come from diverse backgrounds: journalism, teaching, product management, psychology, law, creative writing.

The common thread? They're excellent communicators who think systematically.

Your Prompt Engineering Journey Starts Now

Here's what to do after reading this post:

Immediate Actions (Today):

Open an AI assistant (ChatGPT, Claude, Gemini, etc.)

Try this exercise:

Ask: "What is photosynthesis?"

Then ask: "Explain photosynthesis to three audiences: (1) a 5th grader, (2) a high school biology student, (3) a university botany professor. Use analogies for the 5th grader, technical accuracy for the student, and research-level detail for the professor."

Compare the results

Observe the difference: That's prompt engineering in action

This Week:

Experiment with 3-5 different tasks (writing, analysis, coding, etc.)

For each, compare a simple prompt vs. a detailed, well-structured prompt

Document what works and what doesn't

Start building your own prompt library

Before Next Post:

Choose one regular work task you perform

Draft a prompt that could help automate or improve it

Test and iterate on that prompt

Note: What worked? What didn't? What surprised you?

Looking Ahead: Post #2 Preview

In our next post, "Understanding Large Language Models: What You Need to Know," we'll pull back the curtain on how these systems actually work:

The transformer architecture (simplified, no math required)

Why tokenization matters more than you think

What "training data cutoff" really means

How different models compare (GPT vs. Claude vs. Gemini vs. open-source)

What parameters like temperature and top-p actually do

The practical limitations you need to work around

You don't need to become an ML engineer, but understanding the engine helps you drive better.

Final Thoughts

Prompt engineering is not a fad. It's not a temporary skill that will be automated away next year. It's the fundamental interface between human intelligence and artificial intelligence.

As models improve, prompt engineering becomes MORE important, not less. Better models can do more—but only if you know how to harness that capability.

This series is your comprehensive guide. We're going deep—deeper than any other resource available. By the time you finish all 42 posts, you'll have:

✅ Mastery of every major prompting technique

✅ A library of tested, reusable prompts

✅ Understanding of tools and frameworks

✅ Real-world application experience

✅ Professional-grade skills for your career

But here's the secret: You don't need to wait until the end. Every post will give you immediately applicable skills. Start using what you learn right away.

The future belongs to those who can collaborate effectively with AI. You've just taken the first step.

Join the Conversation

What's your biggest prompt engineering question? Drop it in the comments—I'm using reader questions to shape upcoming posts.

Share your first prompt experiment: Post a before/after example of improving a prompt. Let's learn together.

Subscribe for the series: Don't miss a post. New content every [your schedule].

Next up: Post #2 - "Understanding Large Language Models: What You Need to Know"

The journey to prompt engineering mastery starts with a single prompt. Make yours count.

Have you discovered a prompt pattern that works particularly well? Or hit a wall with something that should work but doesn't? Share your experience—this series is built on real-world practice, not just theory.

Welcome to the cutting edge. Let's build something remarkable together.